Is AI governance only about safety, or should it also control product behavior?

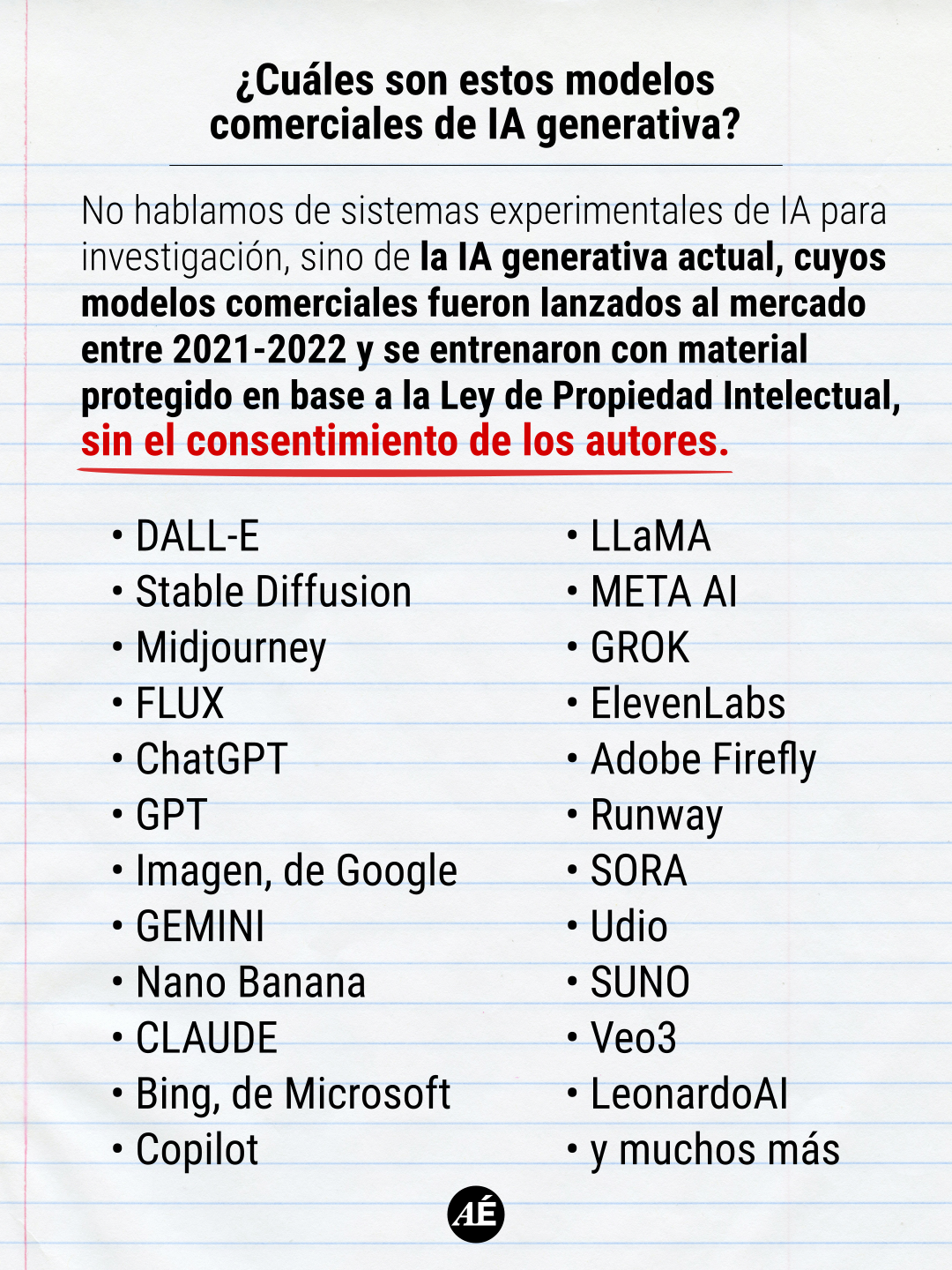

AI governance discussions often focus on safety and compliance, but a new perspective emphasizes governing how the AI behaves as a product. This behavioral governance approach aims to ensure that an AI consistently acts as the product intends by managing aspects such as identity, memory, and tone. This is crucial for AI products, especially agents, which must maintain reliability and a consistent user experience rather than only avoid harmful outputs.

IMPACT: Highlights the need for AI governance to extend beyond safety to encompass product behavior and consistency for a better user experience.