Brazil cancels federal tax on imported goods worth $50 or less

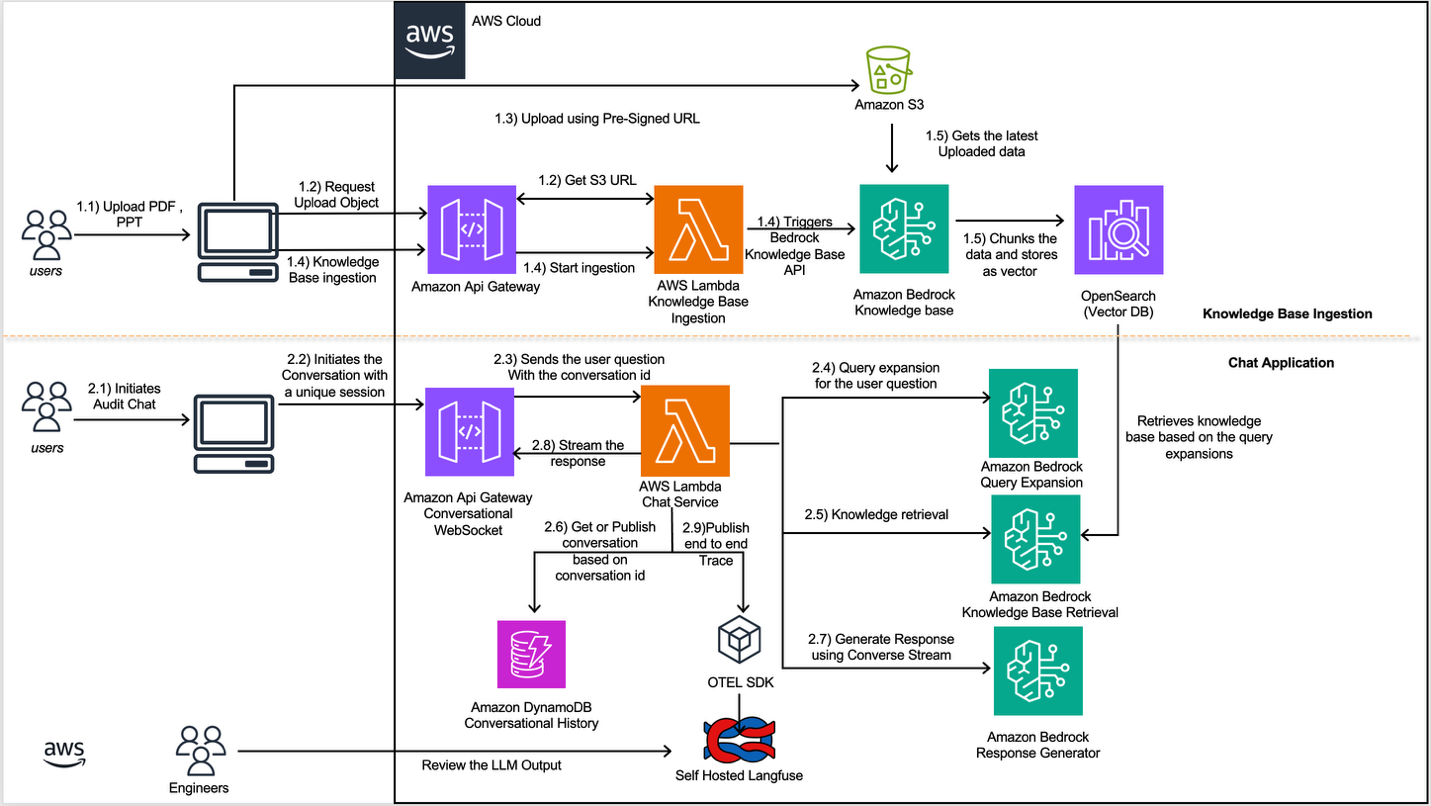

South Korea's Korea Exchange (KRX) has integrated AI technology into its capital market surveillance. The move follows KRX's acquisition of local AI startup Fair Labs, a deal aimed at accelerating its AI transformation and strengthening its data operations. KRX expects AI to improve its market monitoring and data analysis capabilities.

AI IMPACT: Enhances financial market surveillance and data operations through AI integration.