AI Model Deployment: Strategies for Production LLM Serving

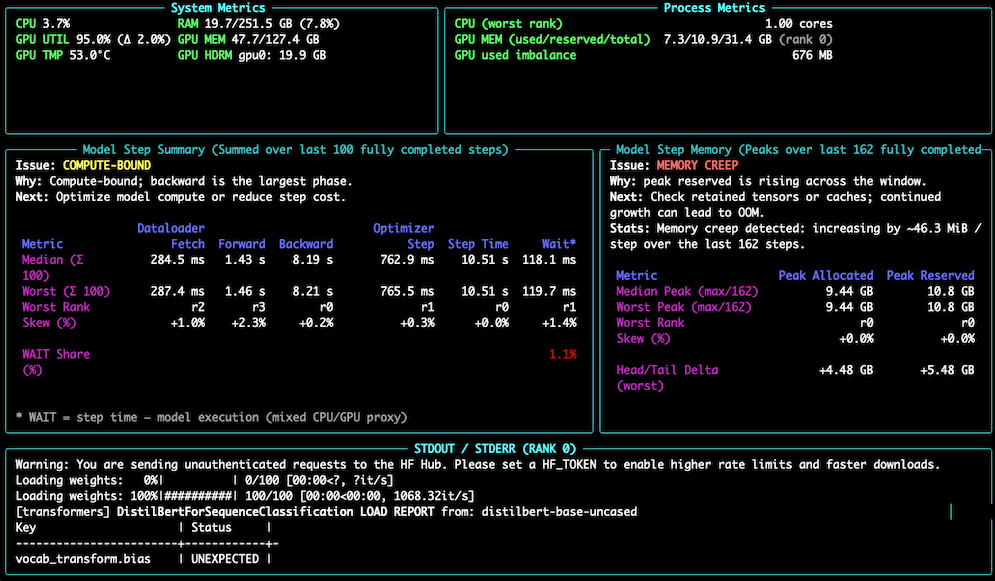

Deploying large language models (LLMs) to production requires specialized infrastructure and optimization techniques because of their compute and memory demands. Options range from managed APIs such as OpenAI and Anthropic, which favor simplicity, to self-hosted solutions built on frameworks such as vLLM, which offer greater control and cost-efficiency at scale. Key optimization strategies include continuous batching, speculative decoding, and various caching methods that reduce latency and computational cost. Production deployments also need robust monitoring of performance metrics and GPU resources.

IMPACT: Provides practical guidance for developers on deploying and optimizing LLMs in production environments.
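
To make the continuous batching idea from the summary concrete, here is a toy simulation that counts decode steps under static and continuous batching. It is a sketch under simplifying assumptions, not how vLLM or any other framework implements its scheduler: each request is reduced to a positive number of tokens left to generate, one decode step yields one token per running request, and prefill, KV-cache memory, and arrival times are ignored. The function names and the sample workload are hypothetical.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Static batching: each batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Continuous batching: a finished request frees its slot immediately,
    and a waiting request takes that slot before the next decode step."""
    waiting = deque(lengths)
    running = []  # tokens still to generate for each in-flight request
    steps = 0
    while waiting or running:
        # Fill any free slots from the waiting queue.
        while waiting and len(running) < batch_size:
            running.append(waiting.popleft())
        # One decode step: every running request emits one token;
        # requests that reach zero remaining tokens leave the batch.
        running = [r - 1 for r in running if r - 1 > 0]
        steps += 1
    return steps


# Hypothetical workload: output lengths (in tokens) for six requests.
lengths = [2, 8, 3, 8, 2, 2]
print("static:    ", static_batching_steps(lengths, batch_size=2))
print("continuous:", continuous_batching_steps(lengths, batch_size=2))
```

With this workload the static schedule takes 18 decode steps and the continuous schedule takes 13, because short requests no longer hold a slot idle while a long request in the same batch finishes.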
