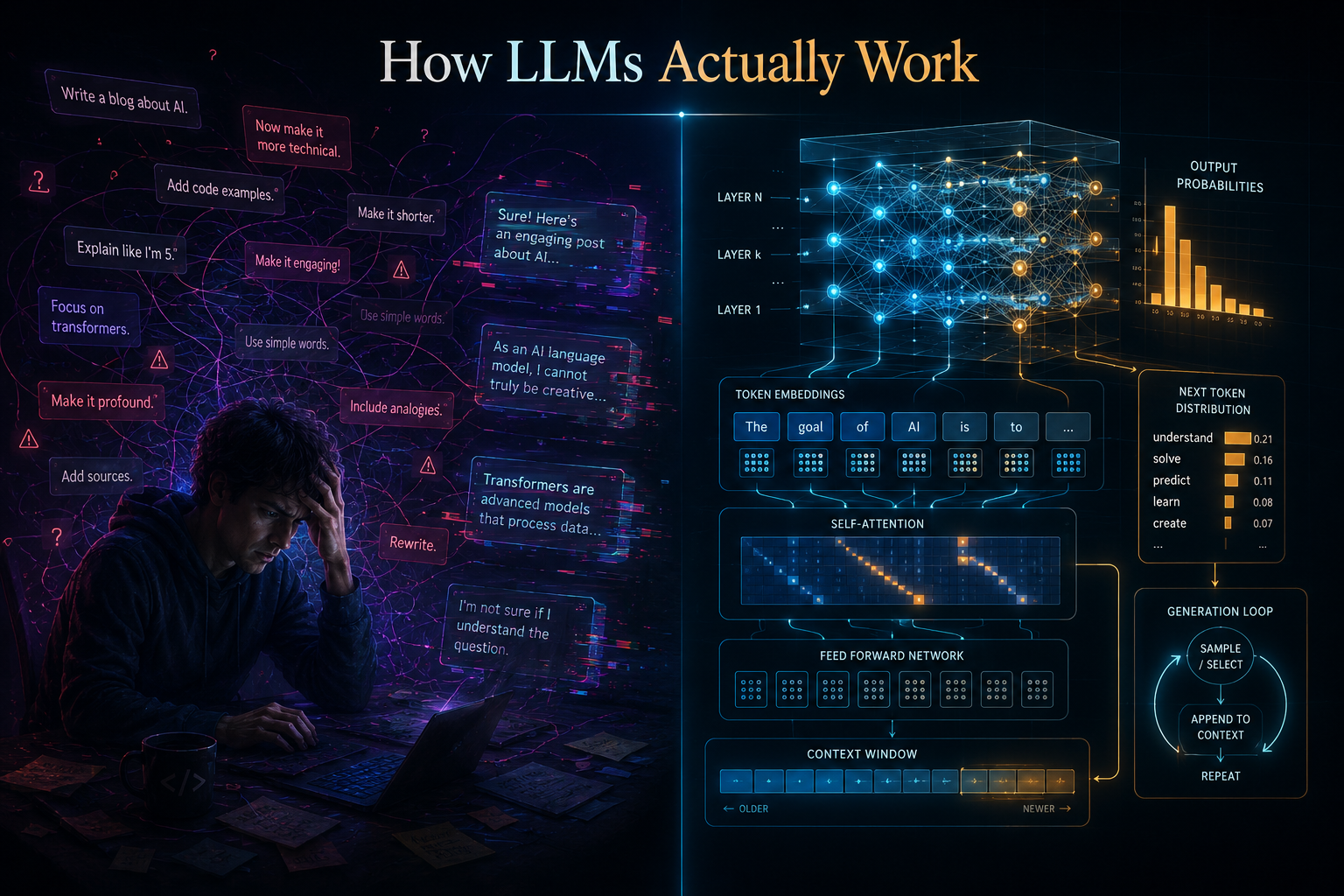

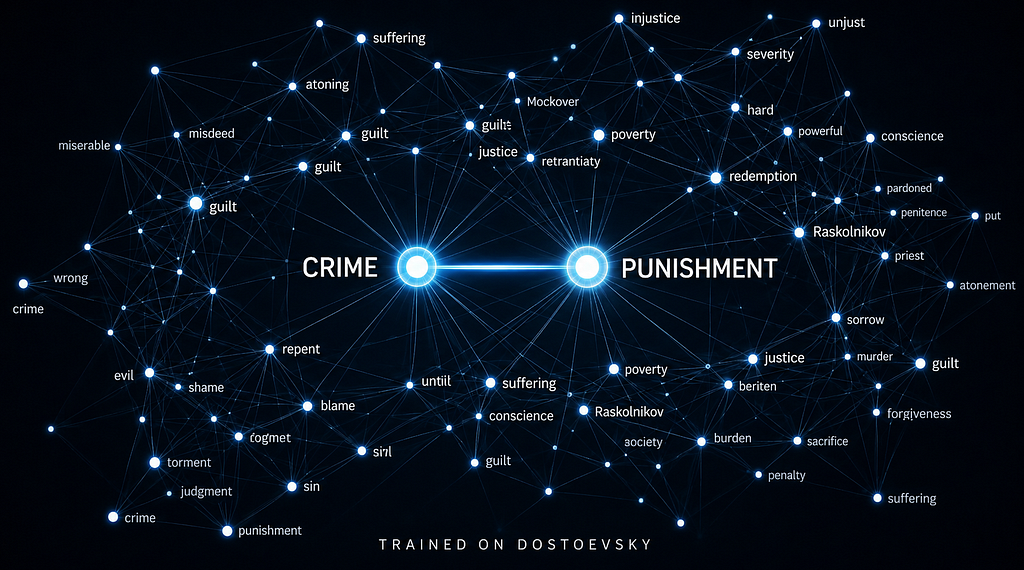

Building an LLM From Scratch: I Trained Word Embeddings on Dostoevsky. Here’s What I Found.

The author describes building word embeddings from scratch, using Dostoevsky's novels as a training corpus of nearly one million words. This step follows their earlier work on character-level tokenization and aims to represent each word as a dense vector that captures semantic relationships, going beyond simple frequency counts. The article explains the mathematics behind embeddings and highlights the limitations of earlier NLP representations such as one-hot encodings, which treat every pair of distinct words as equally unrelated and suffer from data sparsity.
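To make the contrast with one-hot encodings concrete, here is a minimal sketch in standard-library Python. It is not the author's code. The toy sentence, the helper names (`build_vocab`, `one_hot`, `cosine`, `init_embeddings`, `embed`, `one_hot_times_matrix`) and the embedding size of 8 are hypothetical choices for illustration. The sketch shows two things: distinct one-hot vectors are always orthogonal (cosine similarity 0), and a dense embedding lookup is equivalent to multiplying a one-hot vector by an embedding matrix.

```python
import math
import random

# Hypothetical helpers for illustration; not taken from the article.

def build_vocab(tokens):
    """Map each distinct word to an integer id in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


def one_hot(word, vocab):
    """|V|-dimensional vector with a single 1.0 at the word's index."""
    vec = [0.0] * len(vocab)
    vec[vocab[word]] = 1.0
    return vec


def cosine(u, v):
    """Cosine similarity: dot product divided by the product of the norms."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def init_embeddings(vocab_size, dim, seed=0):
    """Embedding matrix E: one dense row of length `dim` per word.
    Random here; training would adjust these rows."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.1) for _ in range(dim)] for _ in range(vocab_size)]


def embed(word, vocab, E):
    """Dense lookup: return the word's row of E."""
    return E[vocab[word]]


def one_hot_times_matrix(vec, E):
    """Row vector times matrix, written out to show it selects one row of E."""
    dim = len(E[0])
    return [sum(vec[i] * E[i][j] for i in range(len(vec))) for j in range(dim)]


if __name__ == "__main__":
    # Toy sentence standing in for the Dostoevsky corpus (hypothetical).
    tokens = "crime and punishment and the idiot".split()
    vocab = build_vocab(tokens)

    # Distinct one-hot vectors are orthogonal, so every pair scores 0.
    print(cosine(one_hot("crime", vocab), one_hot("punishment", vocab)))  # 0.0

    E = init_embeddings(len(vocab), dim=8)
    # The lookup and the one-hot matrix product give the same vector.
    print(embed("idiot", vocab, E) == one_hot_times_matrix(one_hot("idiot", vocab), E))  # True
    # Dense vectors can take any similarity in [-1, 1]; random until trained.
    print(cosine(embed("crime", vocab, E), embed("punishment", vocab, E)))
```

The matrix here is random. Training it (for example with a word2vec-style objective, which is an assumption and not necessarily the method the article uses) is what pulls the rows of words that appear in similar contexts closer together, something one-hot vectors can never express.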

IMPACT: Demonstrates a foundational NLP technique for representing word meaning, one that is crucial for building more sophisticated language models.