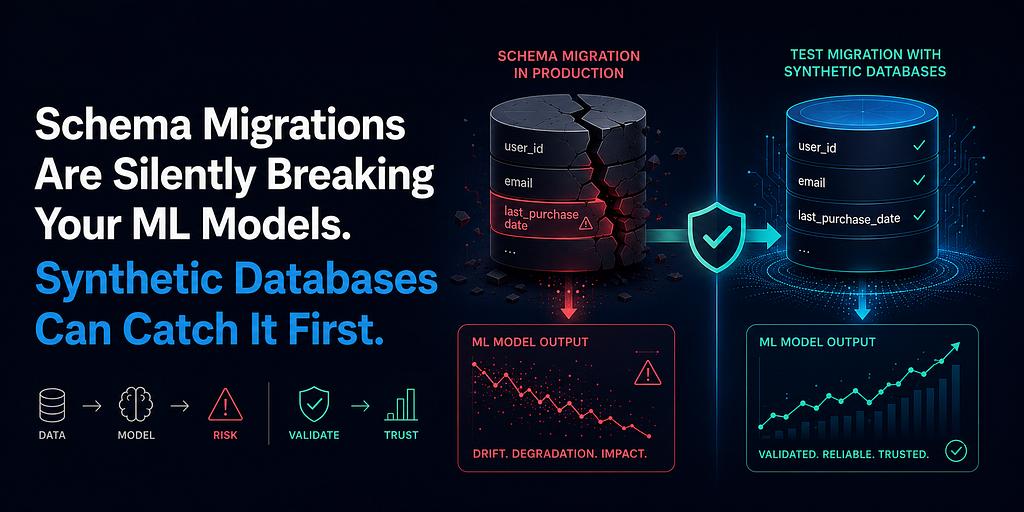

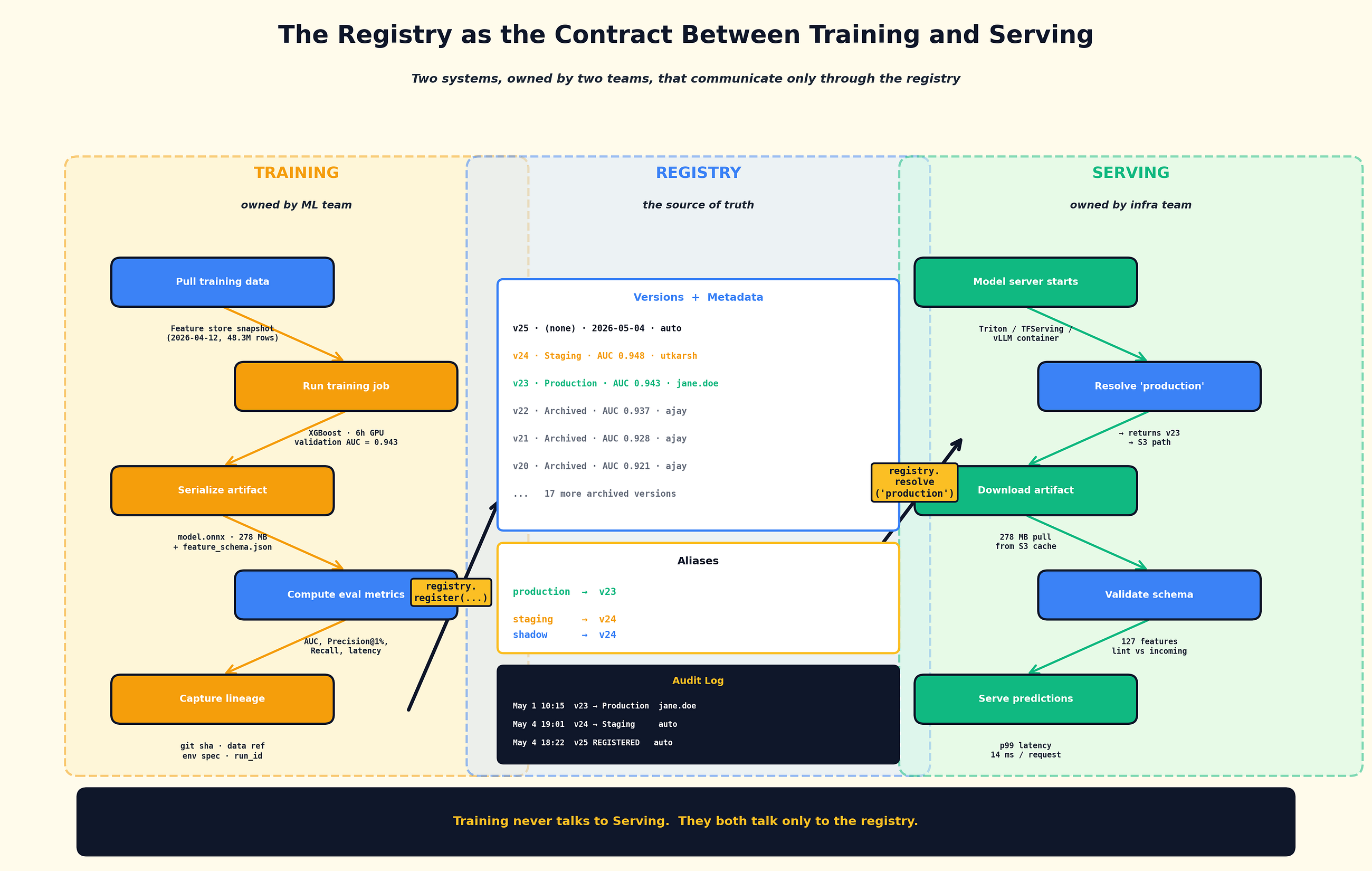

Machine Learning System Design: Model Versioning & the Registry: Why Your S3 Bucket Is Not a Source…

This article discusses the critical need for robust model versioning and registry systems in machine learning development. It argues that simple cloud storage solutions like S3 buckets are insufficient for managing the complexities of ML model lifecycles. The piece emphasizes the importance of dedicated registries for tracking, organizing, and deploying models effectively.

IMPACT: Highlights the necessity of proper infrastructure for managing ML models, which is crucial for scalable and reliable AI deployments.