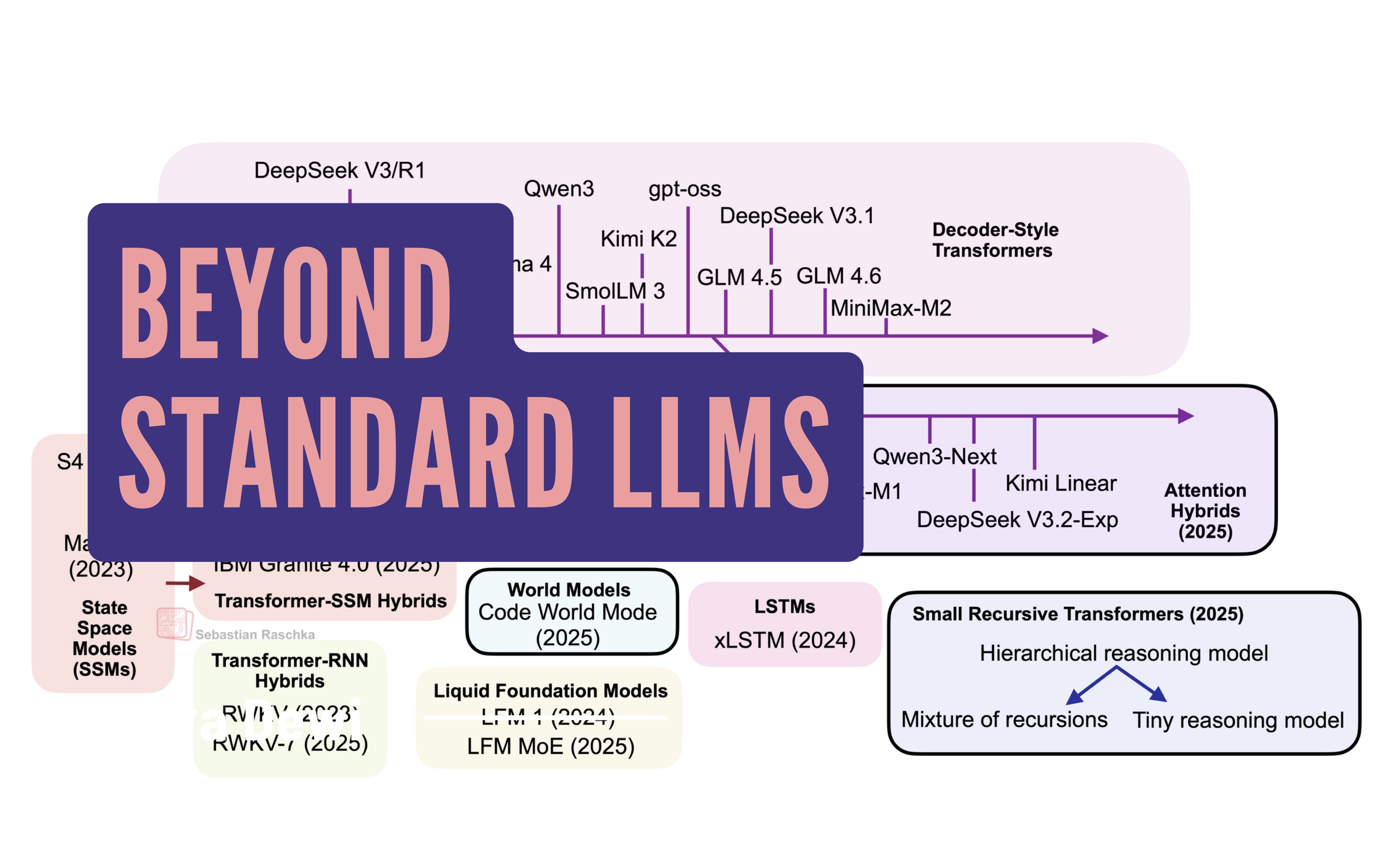

Sebastian Raschka's article "Beyond Standard LLMs" surveys emerging alternatives to the traditional autoregressive, decoder-style transformer. Standard models of this kind, including recent open-weight releases such as DeepSeek R1 and MiniMax-M2, still represent the state of the art, but Raschka highlights promising new directions: linear attention hybrids that aim for better efficiency, and approaches such as code world models that aim for better performance. Together, these signal a diversification in LLM architecture research.

