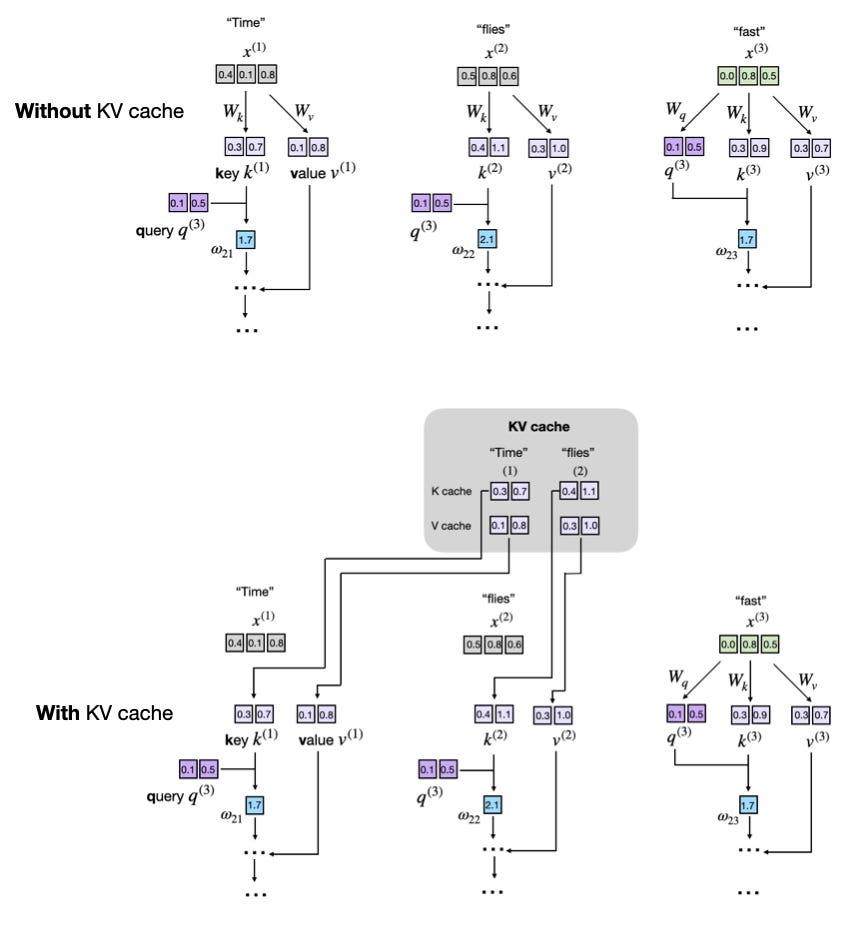

The KV cache is a key technique for speeding up inference of Large Language Models (LLMs) in production environments. It stores the key and value vectors that the attention layers compute for earlier tokens and reuses them at each generation step, so only the newest token's keys and values need to be computed. The cache increases memory requirements (it grows with sequence length) and adds code complexity, but the inference speed-ups often make it a worthwhile trade-off when deploying LLMs.
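To make the reuse concrete, here is a minimal sketch of a single attention head decoding one token at a time, with and without a cache. It is an illustration under stated assumptions, not the implementation from the summarized sources: the helper names (`matvec`, `attend`, `step_with_cache`, `step_without_cache`), the dictionary-based cache, and the random weights are all hypothetical, and a real model would use batched tensors, multiple heads, and multiple layers.

```python
import math
import random

def matvec(x, W):
    """Multiply row vector x (length d_in) by matrix W (d_in x d_out)."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]

def attend(q, keys, values):
    """Scaled dot-product attention for one query over lists of keys and values."""
    scale = math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
    m = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]

def step_without_cache(tokens, Wq, Wk, Wv):
    """Recompute keys and values for every token seen so far (redundant work)."""
    keys = [matvec(x, Wk) for x in tokens]
    values = [matvec(x, Wv) for x in tokens]
    return attend(matvec(tokens[-1], Wq), keys, values)

def step_with_cache(new_token, Wq, Wk, Wv, cache):
    """Project only the new token, then append its key and value to the cache."""
    cache["k"].append(matvec(new_token, Wk))
    cache["v"].append(matvec(new_token, Wv))
    return attend(matvec(new_token, Wq), cache["k"], cache["v"])

# Hypothetical toy setup: random projection weights and five token embeddings.
random.seed(0)
d = 4

def rand_matrix():
    return [[random.gauss(0, 1) for _ in range(d)] for _ in range(d)]

Wq, Wk, Wv = rand_matrix(), rand_matrix(), rand_matrix()
tokens = [[random.gauss(0, 1) for _ in range(d)] for _ in range(5)]

cache = {"k": [], "v": []}
max_diff = 0.0
for t in range(len(tokens)):
    cached = step_with_cache(tokens[t], Wq, Wk, Wv, cache)
    full = step_without_cache(tokens[: t + 1], Wq, Wk, Wv)
    max_diff = max(max_diff, max(abs(a - b) for a, b in zip(cached, full)))
```

Both paths produce the same attention output for the newest token, but the uncached path re-projects every earlier token at every step, while the cached path projects only one token per step. The cost of the cached path is the memory held in `cache`, which grows by one key and one value per generated token.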

Summary written by gemini-2.5-flash-lite from 2 sources.
