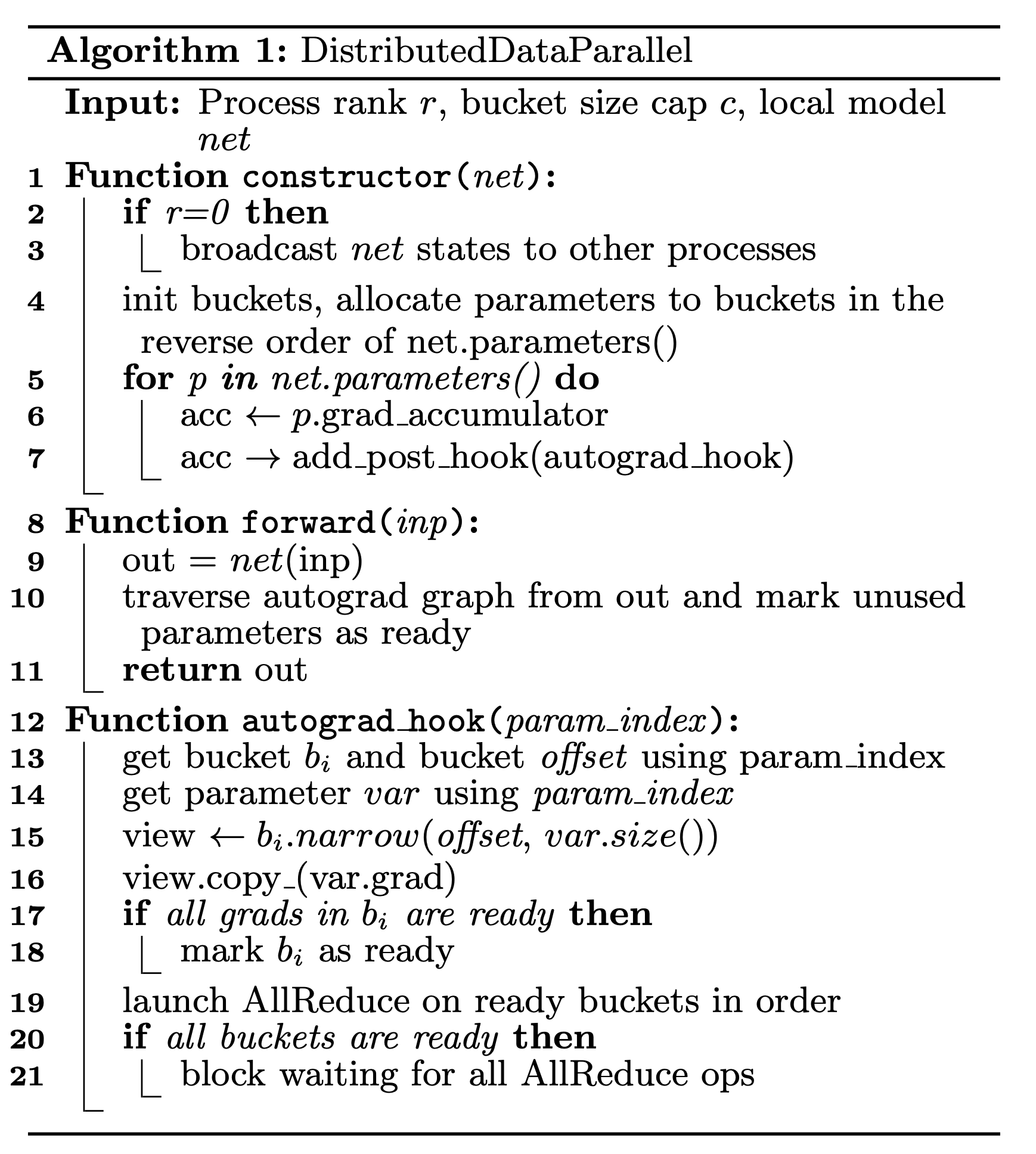

Training extremely large neural networks is challenging because their memory requirements often exceed the capacity of a single GPU and training runs are long. To address this, practitioners use several parallelism techniques: data parallelism, where the model is replicated across multiple workers that each process a different slice of the data, and model parallelism, where the model itself is partitioned across machines. Gradient accumulation and offloading parameters to CPU memory are also used to improve training efficiency and work within resource constraints.
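Gradient accumulation can be illustrated with a toy example. The sketch below is not taken from the summarized sources; it assumes a hypothetical one-parameter model `y_hat = w * x` with a mean squared error loss, and the helper names `grad_mse` and `accumulated_grad` are made up for illustration. It shows that summing suitably weighted micro-batch gradients reproduces the full-batch gradient, which is why a large batch can be processed in pieces that fit in memory.

```python
# Toy model (an assumption for illustration): y_hat = w * x,
# loss = mean((w * x - y)^2) over the batch.

def grad_mse(w, xs, ys):
    """Gradient of the mean squared error with respect to w."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Accumulate micro-batch gradients, each weighted by its share of the full batch."""
    n = len(xs)
    total = 0.0
    for start in range(0, n, micro_batch_size):
        mx = xs[start:start + micro_batch_size]
        my = ys[start:start + micro_batch_size]
        # Only one micro-batch needs to be "in memory" at a time.
        total += grad_mse(w, mx, my) * len(mx) / n
    return total


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
w = 0.5

full = grad_mse(w, xs, ys)
accum = accumulated_grad(w, xs, ys, micro_batch_size=2)
print(full, accum)
```

The same weighted sum is, in spirit, what data-parallel workers compute when each one holds a shard of the batch and the per-worker gradients are averaged; real frameworks handle this with collective communication rather than a Python loop.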

Summary written by gemini-2.5-flash-lite from 2 sources.
