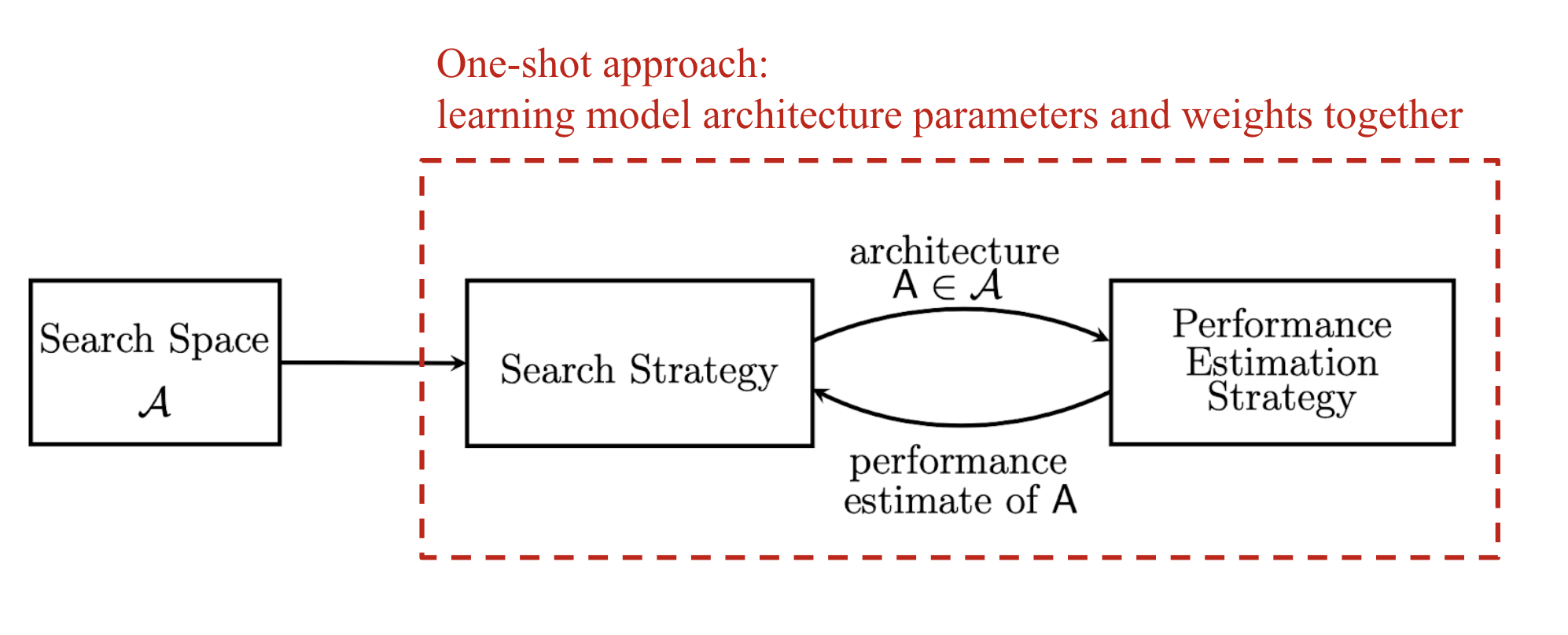

Neural Architecture Search (NAS) is a field focused on automating the design of high-performance neural network architectures. It typically involves three components: a search space that defines the possible operations and connections, a search algorithm that samples candidate architectures from that space, and an evaluation strategy that estimates each candidate's performance. Early NAS methods, such as those of Zoph & Le and Baker et al., built networks sequentially, choosing operations layer by layer. This made the search computationally intensive, in some cases requiring hundreds of GPUs for extended periods. More recent approaches, inspired by the repeated modules of successful hand-designed networks, instead use cell-based representations: they search for a small cell and stack copies of it to form the full network, which improves efficiency.
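The three components can be sketched as a toy random-search loop over a cell-based search space. This is a minimal illustration under stated assumptions: the operation list `OPS`, the four-node cell, the `OP_SCORE` table, and the proxy `evaluate` function are hypothetical stand-ins, not the setup used by Zoph & Le, Baker et al., or any published cell-based method. A real NAS system would train each candidate network and measure its validation accuracy instead of computing a proxy score.

```python
import random
from dataclasses import dataclass
from math import prod

# --- Search space (hypothetical, for illustration only) ---
OPS = ["conv3x3", "conv5x5", "maxpool3x3", "identity"]
NUM_NODES = 4  # intermediate nodes inside one cell

# Hypothetical per-op scores used only by the toy evaluator below.
OP_SCORE = {"conv3x3": 1.0, "conv5x5": 1.2, "maxpool3x3": 0.5, "identity": 0.1}


@dataclass(frozen=True)
class Cell:
    # One (source, op) pair per node; source 0 is the cell input,
    # source j >= 1 is the output of node j.
    edges: tuple


def search_space_size() -> int:
    # Node k (1-based) can read from k earlier sources and apply any op.
    return prod(k * len(OPS) for k in range(1, NUM_NODES + 1))


def sample_cell(rng: random.Random) -> Cell:
    # Search algorithm: uniform random sampling, the simplest NAS baseline.
    edges = tuple(
        (rng.randrange(k), rng.choice(OPS)) for k in range(1, NUM_NODES + 1)
    )
    return Cell(edges)


def build_network(cell: Cell, num_cells: int = 3) -> list:
    # Cell-based representation: one searched cell is stacked num_cells times.
    return [cell] * num_cells


def evaluate(network: list) -> float:
    # Evaluation strategy: a hypothetical proxy score standing in for
    # "train the network and measure validation accuracy".
    return sum(OP_SCORE[op] for cell in network for _, op in cell.edges)


def random_search(num_trials: int, seed: int = 0):
    # Sample candidates, evaluate each one, and keep the best seen so far.
    rng = random.Random(seed)
    best_cell, best_score = None, float("-inf")
    for _ in range(num_trials):
        cell = sample_cell(rng)
        score = evaluate(build_network(cell))
        if score > best_score:
            best_cell, best_score = cell, score
    return best_cell, best_score


if __name__ == "__main__":
    print("cells in search space:", search_space_size())  # 6144
    print("if 3 positions were searched independently:", search_space_size() ** 3)
    cell, score = random_search(num_trials=200, seed=42)
    print("best cell:", cell.edges, "proxy score:", round(score, 2))
```

Because every position reuses the same searched cell, the search algorithm only has to choose among 6,144 cells in this toy space; searching each of three positions independently would raise that to 6,144³ candidates. This illustrates why cell-based spaces are cheaper to explore, though the toy numbers say nothing about real GPU cost.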

Summary written by gemini-2.5-flash-lite from 1 source.

RANK_REASON The item is a blog post summarizing academic research papers on Neural Architecture Search.