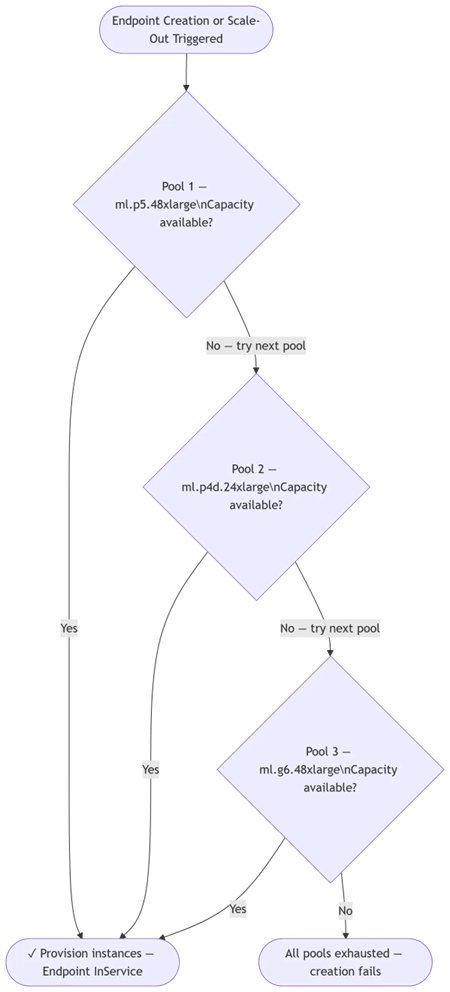

Amazon SageMaker has introduced capacity-aware instance pools for AI inference endpoints. Users define a prioritized list of instance types, and SageMaker automatically selects an available type when the preferred ones are capacity-constrained. The feature aims to streamline the deployment and scaling of generative AI workloads by reducing manual intervention and improving reliability, especially for LLMs and multimodal models that require specific hardware.
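The prioritized-list behavior can be pictured with a small sketch. This is not SageMaker's API or its actual selection logic, which the sources do not detail. The function `pick_instance_type`, the priority list, and the capacity snapshot are all hypothetical, chosen only to illustrate falling back to the first instance type that has capacity.

```python
from typing import Optional, Sequence, Set


def pick_instance_type(priority: Sequence[str], available: Set[str]) -> Optional[str]:
    """Return the first type in priority order that has capacity, else None.

    Hypothetical helper for illustration; not part of any AWS SDK.
    """
    for instance_type in priority:
        if instance_type in available:
            return instance_type
    return None


# Illustrative priority list, most preferred first (assumed values).
priority = ["ml.p5.48xlarge", "ml.p4d.24xlarge", "ml.g5.48xlarge"]

# Assumed capacity snapshot in which the most preferred type is constrained.
available = {"ml.p4d.24xlarge", "ml.g5.48xlarge"}

chosen = pick_instance_type(priority, available)
print(chosen)
```

In this sketch the caller only states an order of preference, and the fallback happens without manual intervention, which is the reliability gain the summary describes.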

Summary written by gemini-2.5-flash-lite from 2 sources.

IMPACT Improves reliability and simplifies scaling for AI inference workloads on AWS.

RANK_REASON Product update for an existing cloud service.