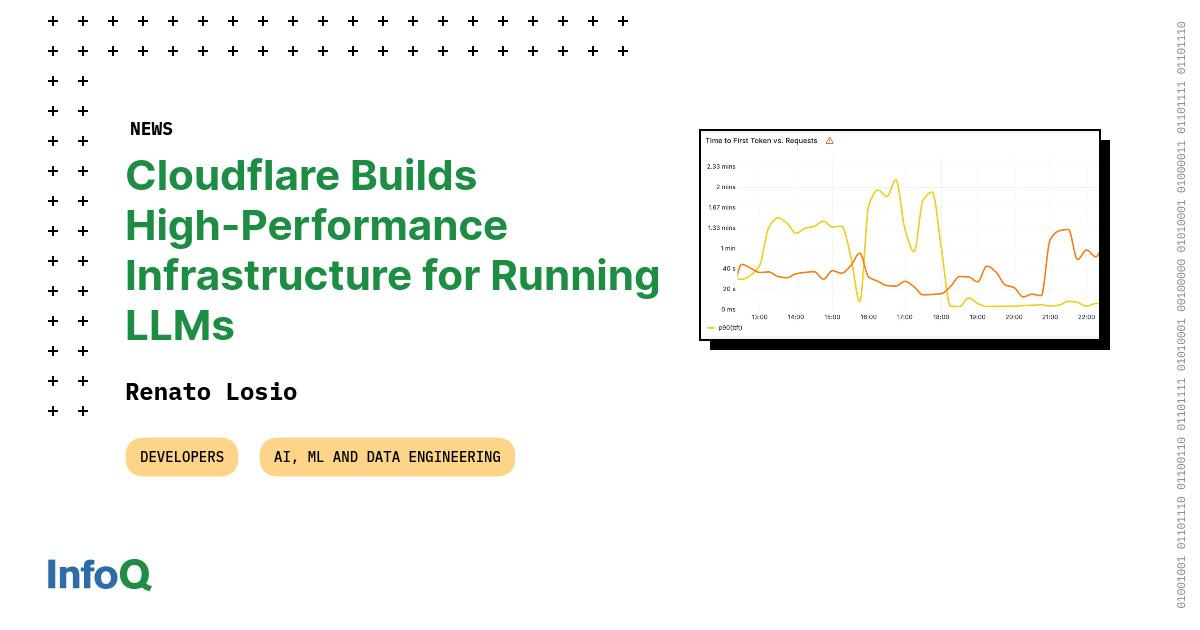

Cloudflare has introduced infrastructure for running AI applications, particularly large language models, across its global network. The system uses Cloudflare Workers and Durable Objects to manage state and compute at the edge, with the aim of keeping latency low. It splits model input processing and output generation across separately optimized systems, so that each stage can run more efficiently and the platform can absorb heavy traffic.
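
The split between input processing and output generation can be illustrated with a minimal sketch. It is not Cloudflare's implementation. Every name in it (`SessionState`, `sessions`, `processInput`, `generateNext`, `handleRequest`) is hypothetical, a plain in-memory `Map` stands in for the per-session state that a Durable Object might hold, and the "model" is a toy that echoes the prompt in upper case.

```typescript
// Hypothetical sketch: none of these names are Cloudflare APIs.
// A Map stands in for per-session state that a Durable Object might hold.

interface SessionState {
  promptTokens: string[]; // result of the input-processing stage
  outputTokens: string[]; // tokens generated so far
}

const sessions = new Map<string, SessionState>();

// Stage 1: input processing. Here it only splits the prompt on whitespace
// and stores the result under the session id.
function processInput(sessionId: string, prompt: string): void {
  const promptTokens = prompt.split(/\s+/).filter((t) => t.length > 0);
  sessions.set(sessionId, { promptTokens, outputTokens: [] });
}

// Stage 2: output generation. A toy generator that returns the prompt
// tokens in upper case, one token per call, then null when none remain.
function generateNext(sessionId: string): string | null {
  const state = sessions.get(sessionId);
  if (state === undefined) {
    throw new Error(`unknown session: ${sessionId}`);
  }
  const index = state.outputTokens.length;
  if (index >= state.promptTokens.length) {
    return null; // generation finished
  }
  const token = state.promptTokens[index].toUpperCase();
  state.outputTokens.push(token);
  return token;
}

// Route one request through both stages and collect the full output.
function handleRequest(sessionId: string, prompt: string): string {
  processInput(sessionId, prompt);
  const out: string[] = [];
  for (let t = generateNext(sessionId); t !== null; t = generateNext(sessionId)) {
    out.push(t);
  }
  return out.join(" ");
}
```

Because the two stages communicate only through the stored session state, each could in principle run on a different system, which is the property the summary describes. The routing shown here is an assumption made for illustration.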

Summary written by gemini-2.5-flash-lite from 3 sources.

IMPACT This infrastructure aims to improve efficiency and manage high traffic demands for AI applications.

RANK_REASON Cloudflare announced new infrastructure for running AI models across its global network.