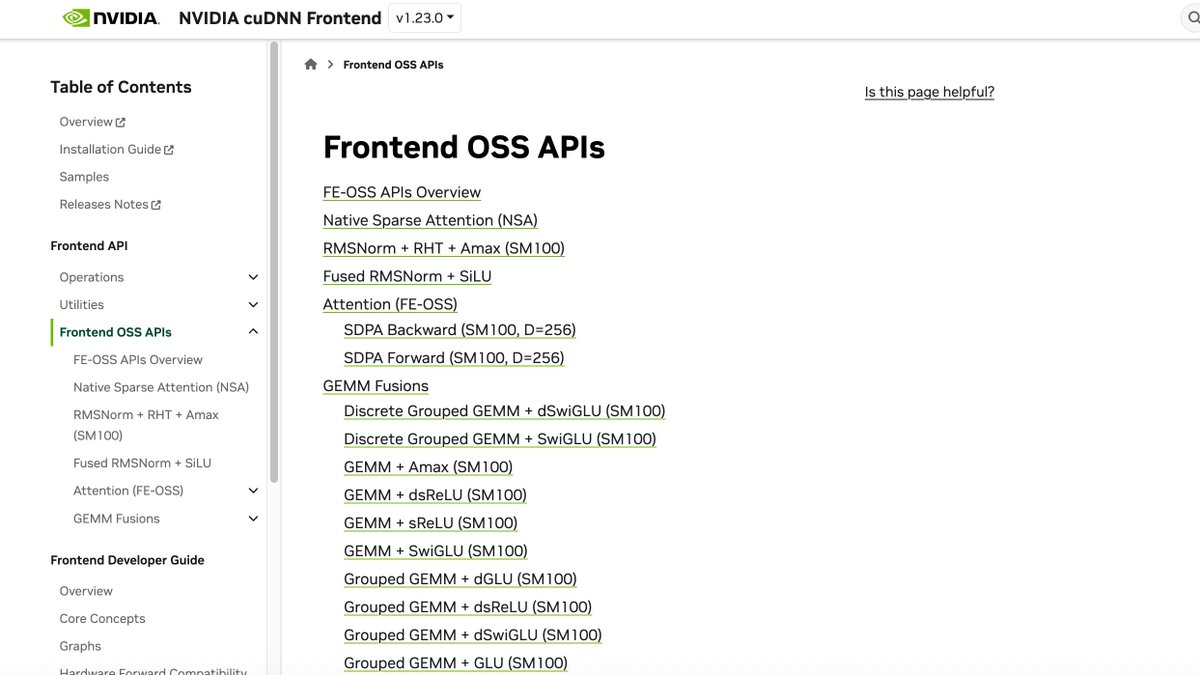

NVIDIA has open-sourced parts of its cuDNN library, which had been closed-source for 12 years. The release includes more than 20 Mixture-of-Experts (MoE) kernels as well as NSA sparse attention kernels. Most of the kernel code is written in the Python-based CuTe-DSL, and public documentation is now available.

Summary written by gemini-2.5-flash-lite from 3 sources.

IMPACT Open-sourcing these cuDNN kernels could accelerate research and development in AI infrastructure and model optimization.

RANK_REASON Open-sourcing of a significant software library component by a major tech company.