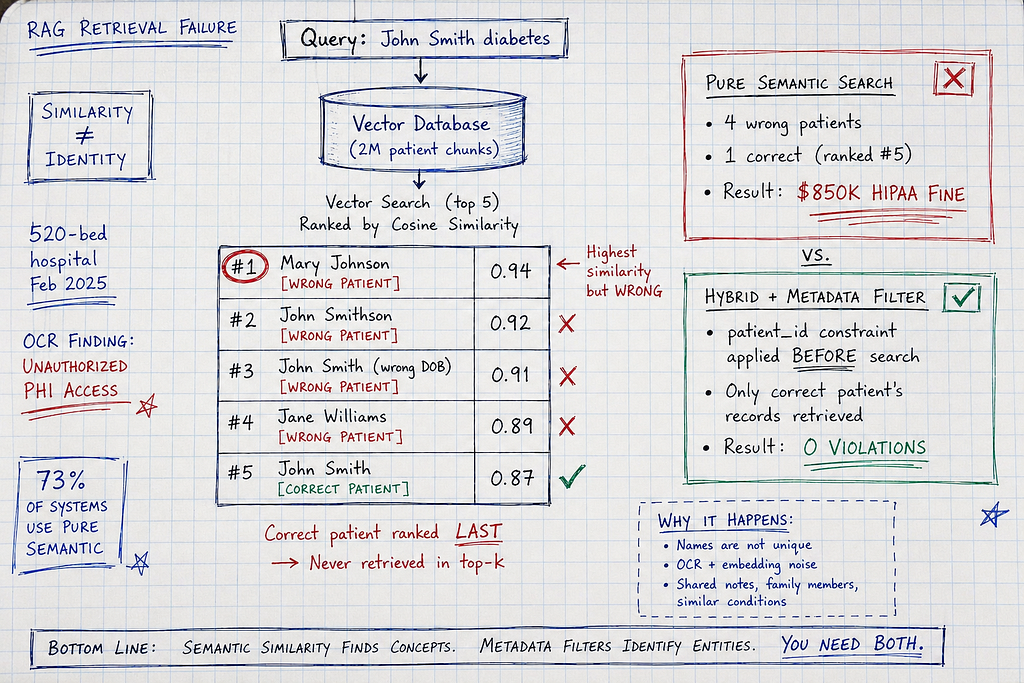

A healthcare AI system built on Retrieval-Augmented Generation (RAG) gave one patient's treatment recommendations to another because the two records shared similar names and medical terminology. The system, which used OpenAI's text-embedding-3-large model for embeddings and Pinecone as its vector database, retrieved Mary Johnson's diabetes history for a query about John Smith. The error led to an $850,000 HIPAA violation and highlights the risks of pure semantic search in sensitive industries.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Highlights critical safety risks of RAG in healthcare, necessitating hybrid retrieval with metadata filtering to prevent patient data breaches.
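To make the metadata-filtering idea concrete, here is a minimal sketch in plain Python. It is not the system from the article: the `Chunk` class, the `patient_id` field, the `retrieve` helper, and the 3-dimensional vectors are all hypothetical and chosen only to reproduce the failure mode on toy data. The point is that an exact-match filter on patient identity runs before similarity ranking, so a semantically closer record from another patient can never be returned.

```python
from dataclasses import dataclass
from math import sqrt
from typing import List, Optional


@dataclass
class Chunk:
    patient_id: str         # hypothetical metadata field stored next to each vector
    text: str
    embedding: List[float]  # toy 3-d vectors; real embeddings are far larger


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(chunks: List[Chunk], query_embedding: List[float],
             patient_id: Optional[str] = None, top_k: int = 1) -> List[Chunk]:
    """Rank chunks by similarity; if patient_id is given, hard-filter on it first."""
    candidates = [c for c in chunks
                  if patient_id is None or c.patient_id == patient_id]
    ranked = sorted(candidates,
                    key=lambda c: cosine(c.embedding, query_embedding),
                    reverse=True)
    return ranked[:top_k]


# Invented toy data: the query vector sits closer to the diabetes record.
chunks = [
    Chunk("mary-johnson", "Mary Johnson: diabetes history", [0.9, 0.1, 0.0]),
    Chunk("john-smith", "John Smith: unrelated visit notes", [0.6, 0.4, 0.1]),
]
query = [1.0, 0.0, 0.0]  # stand-in embedding for a question about John Smith

unfiltered = retrieve(chunks, query)                          # pure semantic search
filtered = retrieve(chunks, query, patient_id="john-smith")   # filter, then rank

print(unfiltered[0].patient_id)  # mary-johnson (wrong patient)
print(filtered[0].patient_id)    # john-smith
```

In this sketch the filter is a hard constraint rather than a ranking signal: if no chunk matches the requested patient, `retrieve` returns an empty list instead of falling back to the nearest neighbour. A production vector database would typically apply an equivalent filter server-side as part of the query.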

RANK_REASON The article details a specific failure mode of RAG in healthcare, presenting a case study and analysis of retrieval errors.