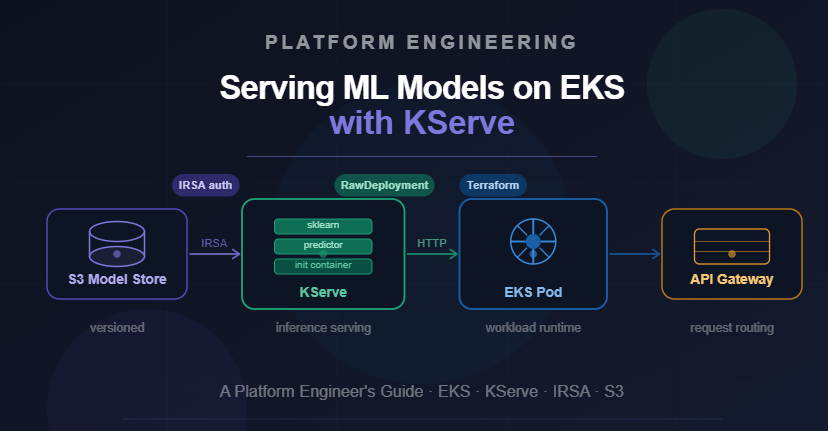

This guide details how platform engineers can effectively serve machine learning models on Amazon Elastic Kubernetes Service (EKS) using KServe. It provides a step-by-step approach to setting up the necessary infrastructure and configurations for robust ML model deployment. The article emphasizes best practices to ensure successful and efficient model serving within a Kubernetes environment.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Provides practical guidance for deploying and managing ML models in production environments using Kubernetes.

RANK_REASON The article is a technical guide for implementing a specific tool (KServe) on a platform (EKS) for a particular task (serving ML models), fitting the 'tool' category.