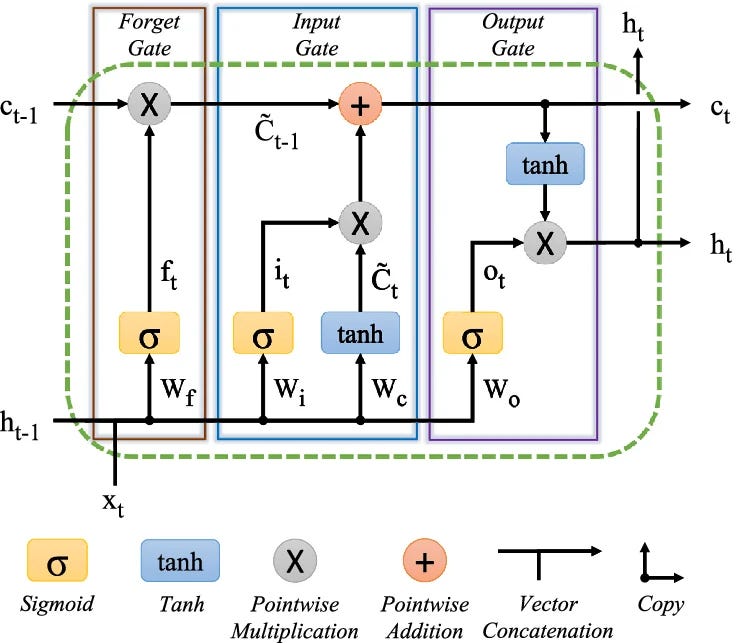

Long Short-Term Memory (LSTM) networks were developed to address a key limitation of traditional Recurrent Neural Networks (RNNs) on sequential data. Vanilla RNNs struggle to retain information across long sequences because gradients vanish during training. LSTMs add a gated internal structure that lets them selectively remember or forget information, which mitigates this problem and improves performance on tasks such as language modeling and time series forecasting.
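To make the gating idea concrete, here is a minimal sketch of one step of an LSTM cell, assuming the commonly used formulation with forget, input, and output gates. It is deliberately scalar (one input, one hidden unit) and uses only the standard library. The names `lstm_step`, `make_params`, `run`, and the parameter keys are hypothetical and chosen for illustration; they do not come from the summarized article.

```python
import math


def sigmoid(z):
    """Logistic function, squashes z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell (assumed standard formulation).

    x: current input, h_prev: previous hidden state,
    c_prev: previous cell state, p: dict of hypothetical parameters.
    """
    f = sigmoid(p["w_f"] * x + p["u_f"] * h_prev + p["b_f"])    # forget gate
    i = sigmoid(p["w_i"] * x + p["u_i"] * h_prev + p["b_i"])    # input gate
    o = sigmoid(p["w_o"] * x + p["u_o"] * h_prev + p["b_o"])    # output gate
    g = math.tanh(p["w_g"] * x + p["u_g"] * h_prev + p["b_g"])  # candidate value
    c = f * c_prev + i * g   # keep part of the old memory, add part of the new
    h = o * math.tanh(c)     # expose a gated view of the memory
    return h, c


def make_params(b_f, b_i):
    """Illustrative parameters: all weights 0.5, only the gate biases vary."""
    p = {f"{kind}_{gate}": 0.5 for kind in ("w", "u") for gate in "fiog"}
    p.update({"b_f": b_f, "b_i": b_i, "b_o": 0.0, "b_g": 0.0})
    return p


def run(p, steps, c0=1.0):
    """Feed zeros for `steps` time steps and return the final cell state."""
    h, c = 0.0, c0
    for _ in range(steps):
        h, c = lstm_step(0.0, h, c, p)
    return c


# Forget gate pushed open, input gate pushed shut: memory is carried forward.
remember = run(make_params(b_f=10.0, b_i=-10.0), steps=50)
# Forget gate pushed shut: the stored value is discarded almost immediately.
forget = run(make_params(b_f=-10.0, b_i=-10.0), steps=1)
print(remember, forget)
```

With the forget gate near 1 and the input gate near 0, the cell state stays close to its starting value over many steps; with the forget gate near 0 it collapses toward zero after a single step. The additive update `c = f * c_prev + i * g` is the path that lets information, and gradients, survive across long sequences in this formulation.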

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Explains the core mechanism that enabled significant advances in sequence modeling and underpinned many later NLP systems.

RANK_REASON The article explains a foundational concept in machine learning research, detailing the architecture and purpose of LSTMs in relation to RNNs.