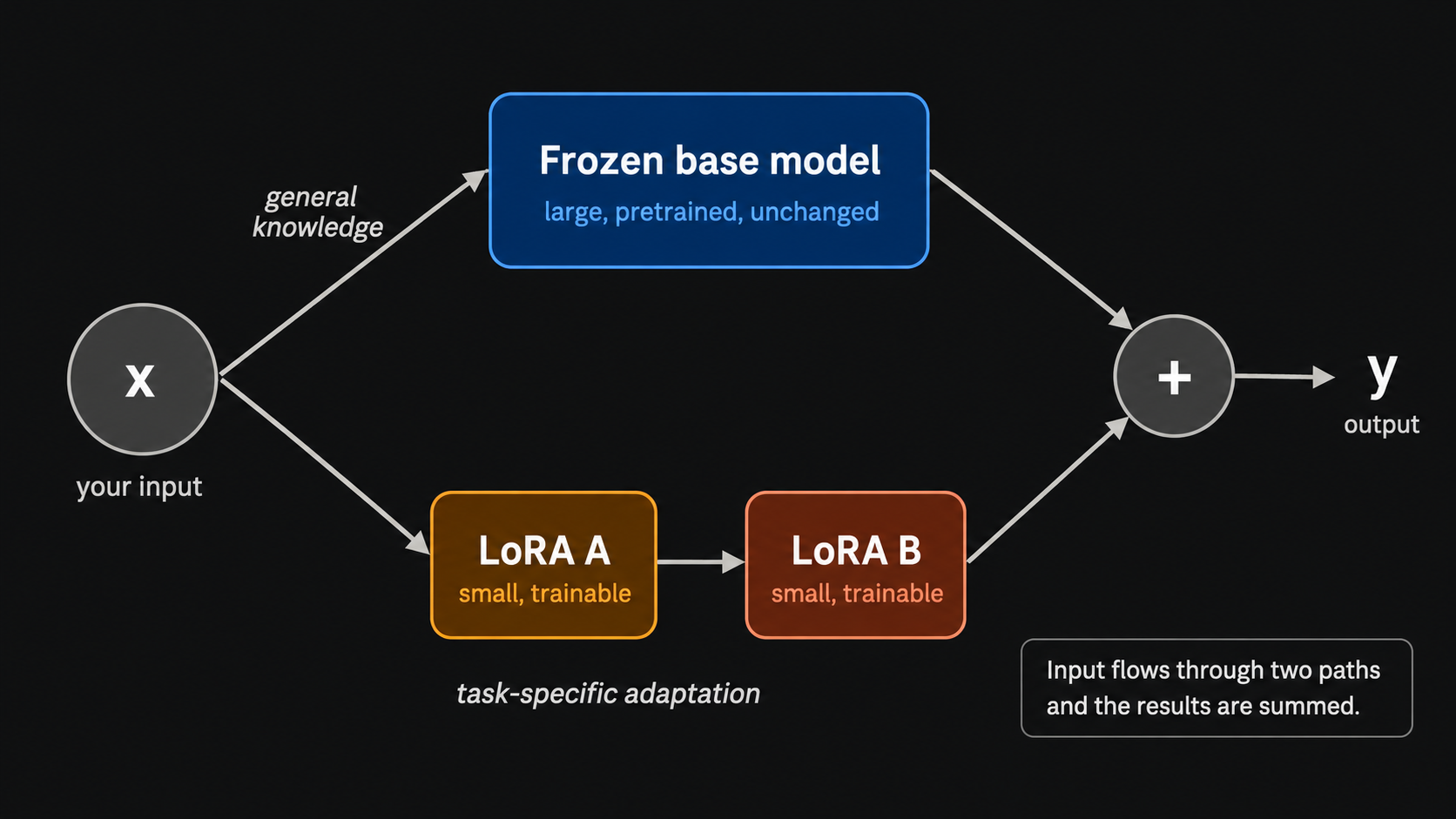

This article gives a detailed, number-by-number explanation of how LoRA (Low-Rank Adaptation) works when fine-tuning large language models. Rather than only stating what LoRA achieves, it walks through the underlying matrix operations that make efficient model adaptation possible, with the aim of giving a deeper, more intuitive understanding of the fine-tuning process.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Provides a deep dive into LoRA, a key technique for efficient model fine-tuning, aiding practitioners in understanding and applying it.

RANK_REASON The article explains a specific technique (LoRA) used in machine learning model fine-tuning, akin to a technical paper or tutorial.
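
The matrix operations the summary refers to can be illustrated with a small sketch. It is not taken from the article: the 4x4 size, the rank `r = 1`, the scaling factor `alpha`, and every numeric value are assumptions chosen so the arithmetic can be followed by hand. It relies only on the standard LoRA formulation, in which a frozen weight `W` is adapted as `W + (alpha / r) * B @ A`, with `B` and `A` being the only trained matrices. The helper functions `matmul`, `add`, and `scale` are hypothetical names defined here in pure Python.

```python
# Minimal LoRA sketch in pure Python (no external libraries).
# All sizes and values are illustrative assumptions, not figures from the article.

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def add(X, Y):
    """Element-wise sum of two matrices of the same shape."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(X, Y)]

def scale(X, s):
    """Multiply every entry of a matrix by the scalar s."""
    return [[s * a for a in row] for row in X]

d_out, d_in, r = 4, 4, 1   # assumed layer shape and LoRA rank
alpha = 2.0                # assumed LoRA scaling hyperparameter

# Frozen pretrained weight W (d_out x d_in); identity keeps the numbers readable.
W = [[1.0 if i == j else 0.0 for j in range(d_in)] for i in range(d_out)]

# Trainable low-rank factors, shown with hand-picked "after training" values.
# (In practice B usually starts at zero, so the update starts at zero.)
B = [[1.0], [0.0], [2.0], [0.0]]   # d_out x r
A = [[0.5, 0.0, 0.0, 1.0]]         # r x d_in

# Low-rank update and the merged weight.
delta_W = scale(matmul(B, A), alpha / r)
W_adapted = add(W, delta_W)

# Forward pass on one input column, computed two equivalent ways.
x = [[1.0], [0.0], [0.0], [1.0]]
h_merged = matmul(W_adapted, x)
h_unmerged = add(matmul(W, x), scale(matmul(B, matmul(A, x)), alpha / r))

# Parameter counts: full fine-tuning versus the LoRA factors.
full_params = d_out * d_in        # 16
lora_params = r * (d_out + d_in)  # 8

print(W_adapted)
print(h_merged, h_unmerged)
print(full_params, lora_params)
```

At this toy size the saving is only 16 versus 8 parameters, but the count for the factors grows as `r * (d_out + d_in)` while the full matrix grows as `d_out * d_in`, so the gap widens quickly for large layers and small ranks.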