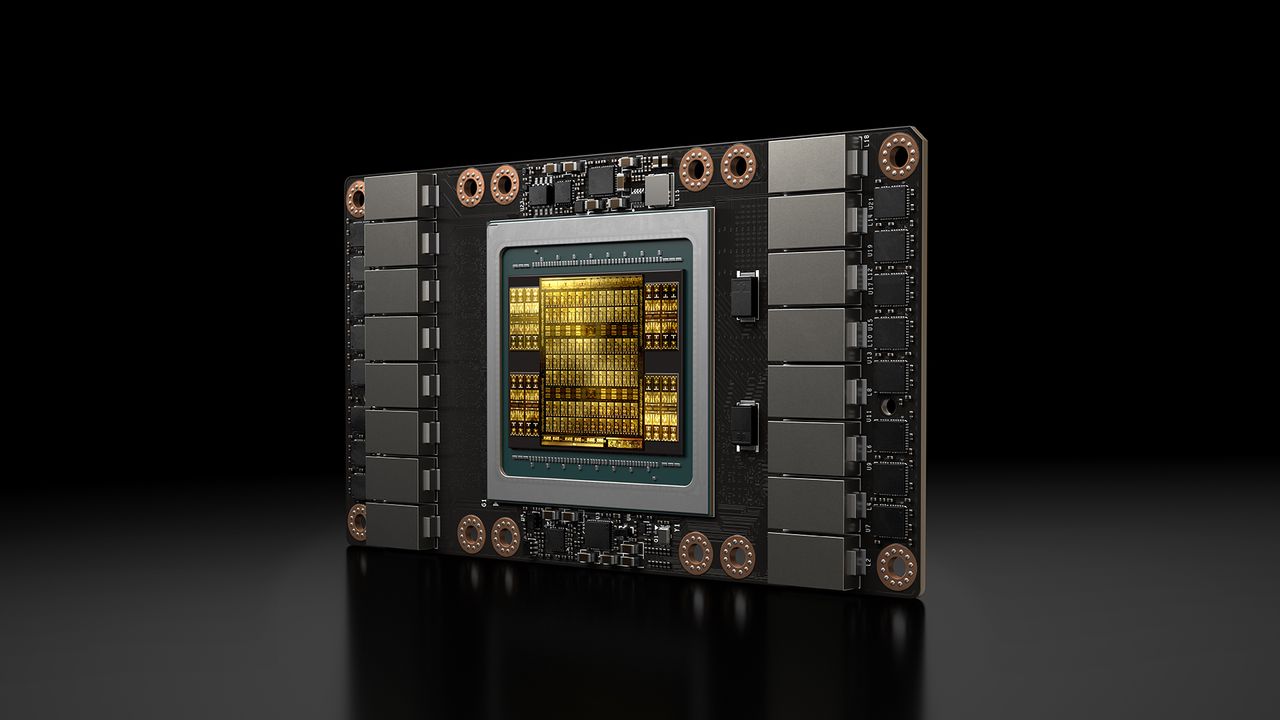

A YouTuber adapted an Nvidia Tesla V100 server GPU, originally designed for specialized server sockets, into a standard PCIe card for consumer motherboards. The modification, which cost around $200, lets the older Volta-architecture GPU run large language models efficiently. In tests, the V100 delivered more tokens per second in AI inference than newer cards such as the RTX 3060 and RX 7800 XT, and was more power-efficient when power-limited.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Demonstrates that older, repurposed server hardware can offer competitive AI inference performance and efficiency, potentially lowering costs for AI operators.

RANK_REASON This is a modification that repurposes existing hardware, not a new product release from a manufacturer.