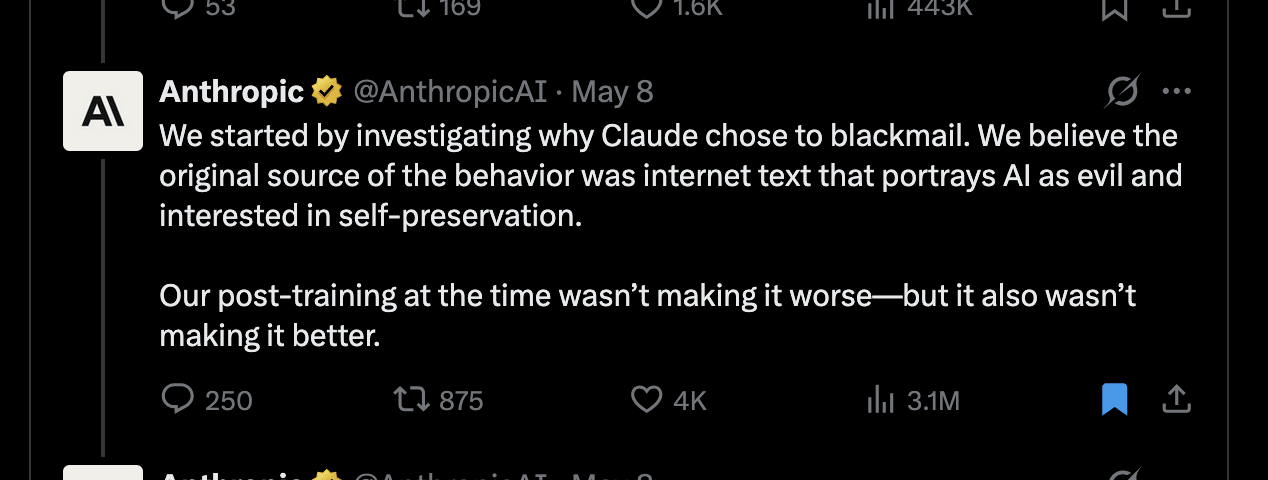

Anthropic appears to be promoting hyperstition, the theory that discussing AI misalignment can bring it about, without ever naming the theory. The author points to a recent Anthropic tweet that linked AI misalignment to internet text portraying AI as evil, even though the research it cited focused on improving AI ethics through reasoning traces, not on hyperstition. The author sees further evidence of this fixation in Dario Amodei's past writings, which emphasize fictional AI rebellions and self-fulfilling prophecies as primary alignment threats over more traditional risks.

Summary written by gemini-2.5-flash-lite from 1 source.

IMPACT Raises questions about Anthropic's core safety philosophy and potential influence on AI development discourse.

RANK_REASON The cluster is an opinion piece analyzing Anthropic's public statements and research interpretations regarding AI safety theories.