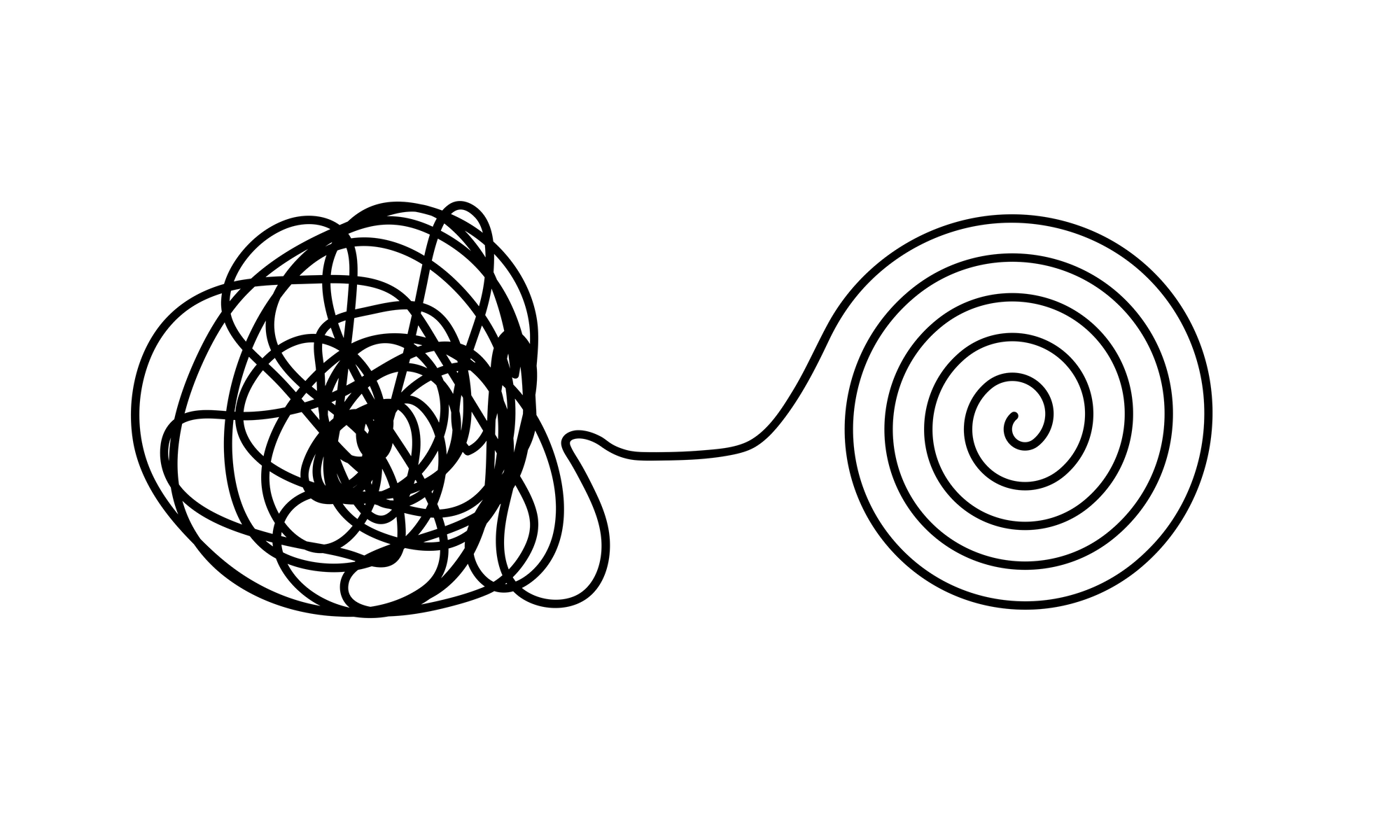

A recent article in The Gradient discusses common pitfalls in machine learning model development, particularly how models can appear effective during training but fail in real-world applications. These failures often stem from misleading training data, including hidden variables and spurious correlations, which cause models to learn irrelevant patterns instead of genuine predictive features. The author highlights examples like COVID-19 prediction models that learned patient posture instead of disease indicators, and a water quality system that gave false safety assurances. The piece suggests that these issues contribute to a reproducibility crisis in scientific research that relies on machine learning.

Summary written by gemini-2.5-flash-lite from 1 source.

RANK_REASON The article is an opinion piece discussing general issues in machine learning model development and reproducibility, rather than a specific release or event.