Algorithmic Perfection

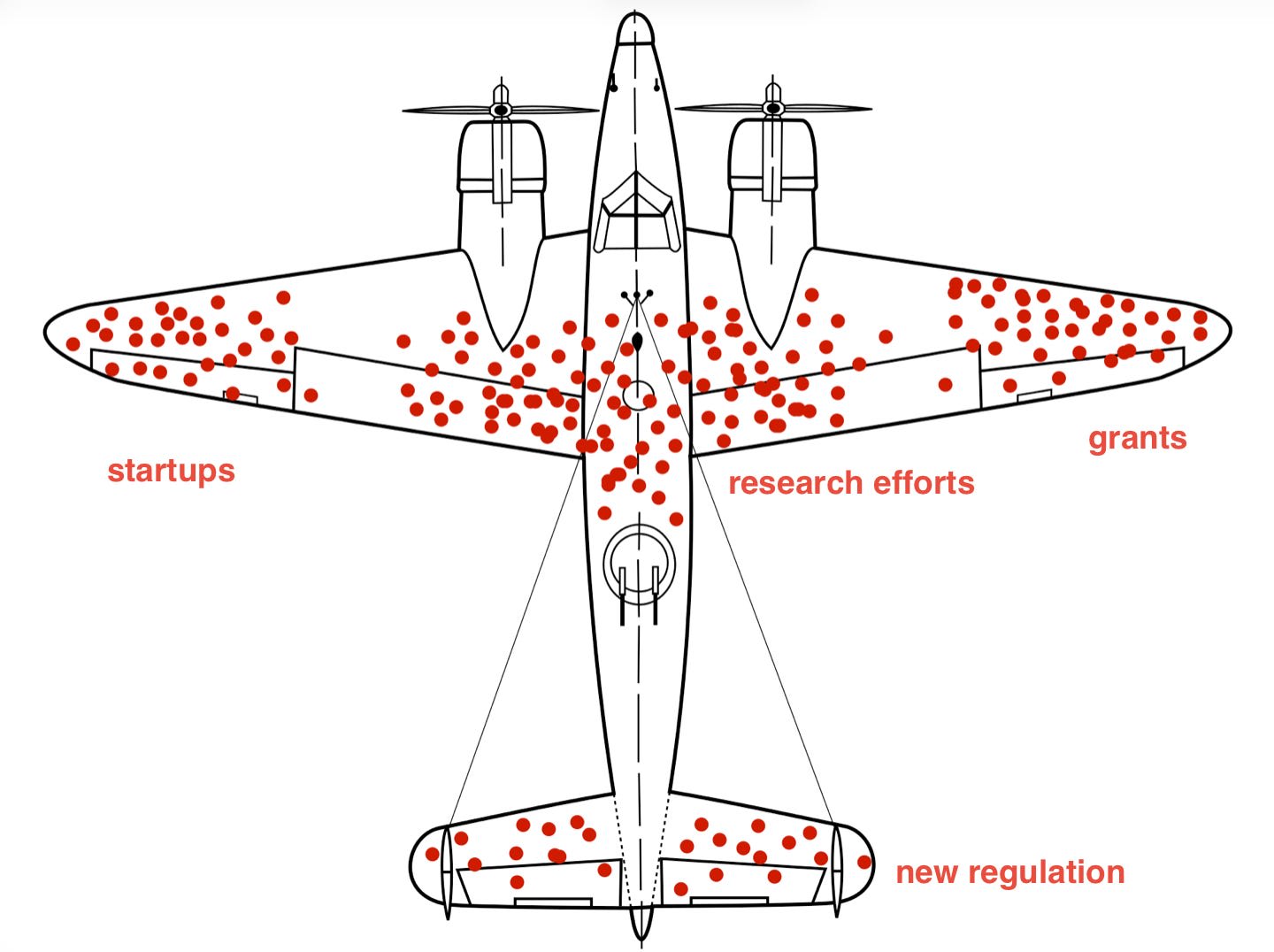

An opinion piece on LessWrong speculates that open-weight AI models could be fine-tuned for malicious purposes, drawing parallels to antibiotic resistance and the Great Oxygenation Event. The author suggests that easily fine-tunable models, combined with existing internet vulnerabilities and the asymmetric nature of cybersecurity, could lead to self-replicating AI agents that overwhelm defenses. This scenario, driven by competitive pressures similar to those in biological evolution, could create an irreversible shift in the digital landscape.

IMPACT Speculates on future AI risks, suggesting that an arms race in AI development could lead to self-replicating agents.