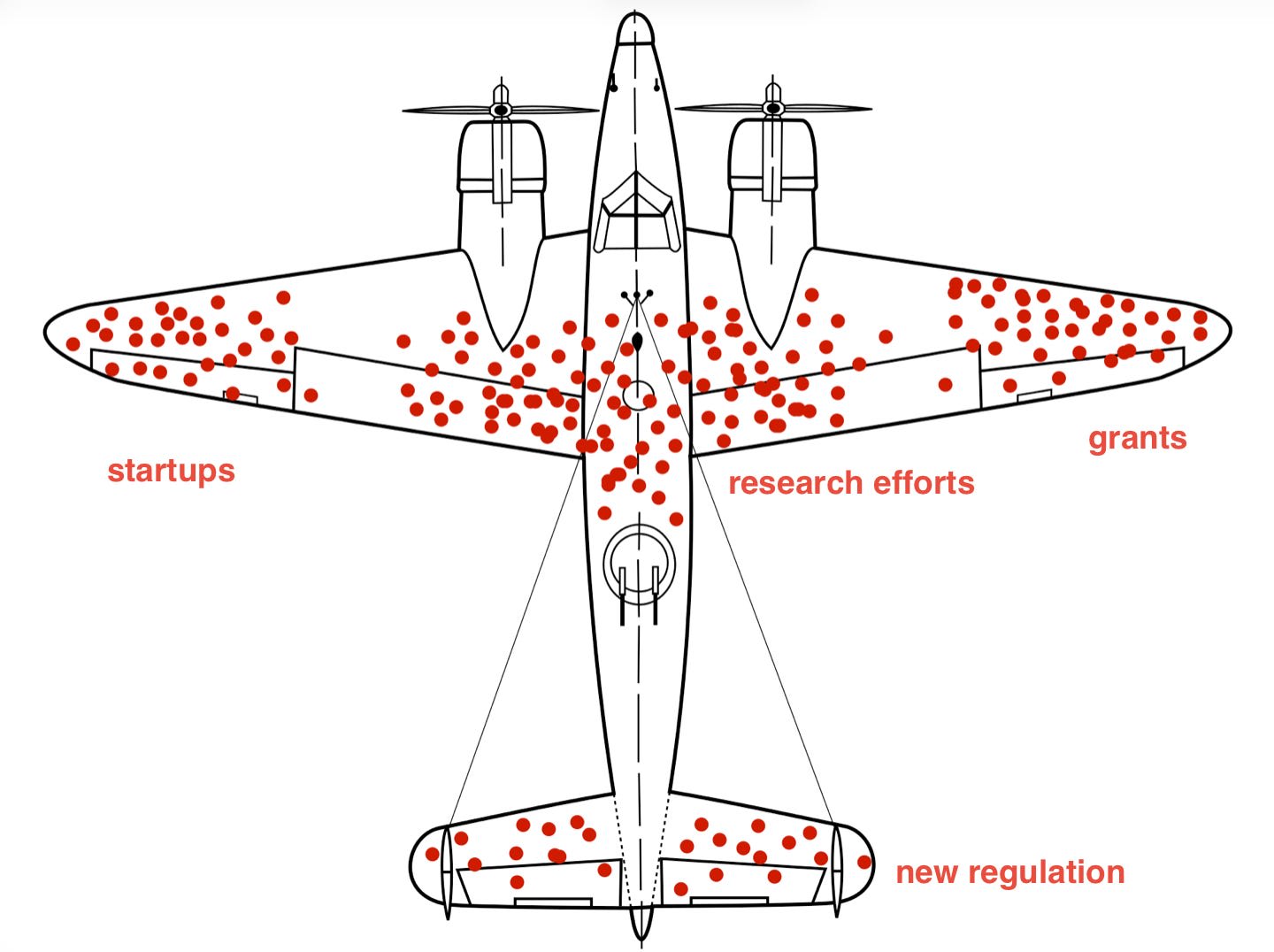

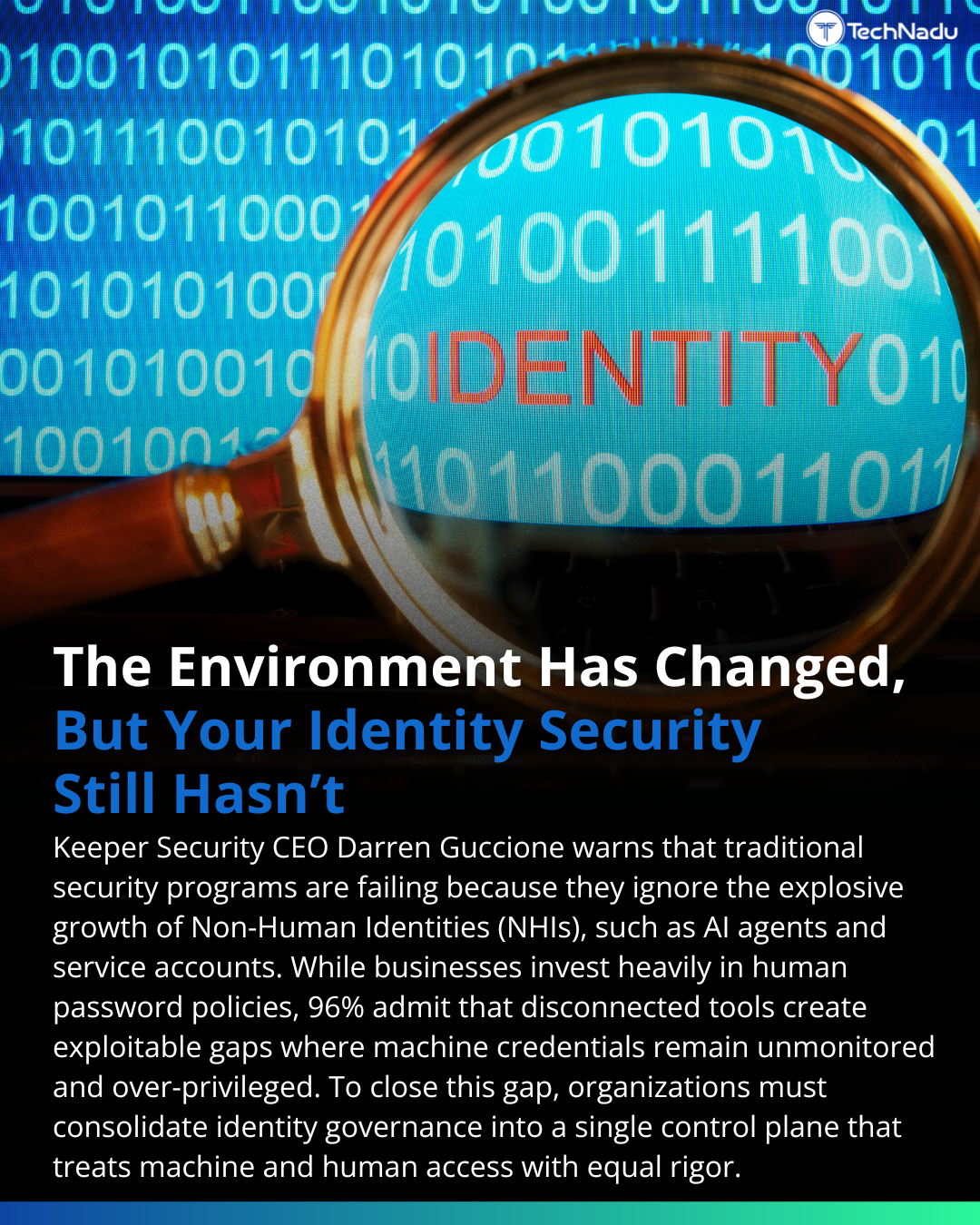

Identity security programs were built for human users, but AI agents, APIs, and service accounts are now expanding the attack surface at machine speed. New ins

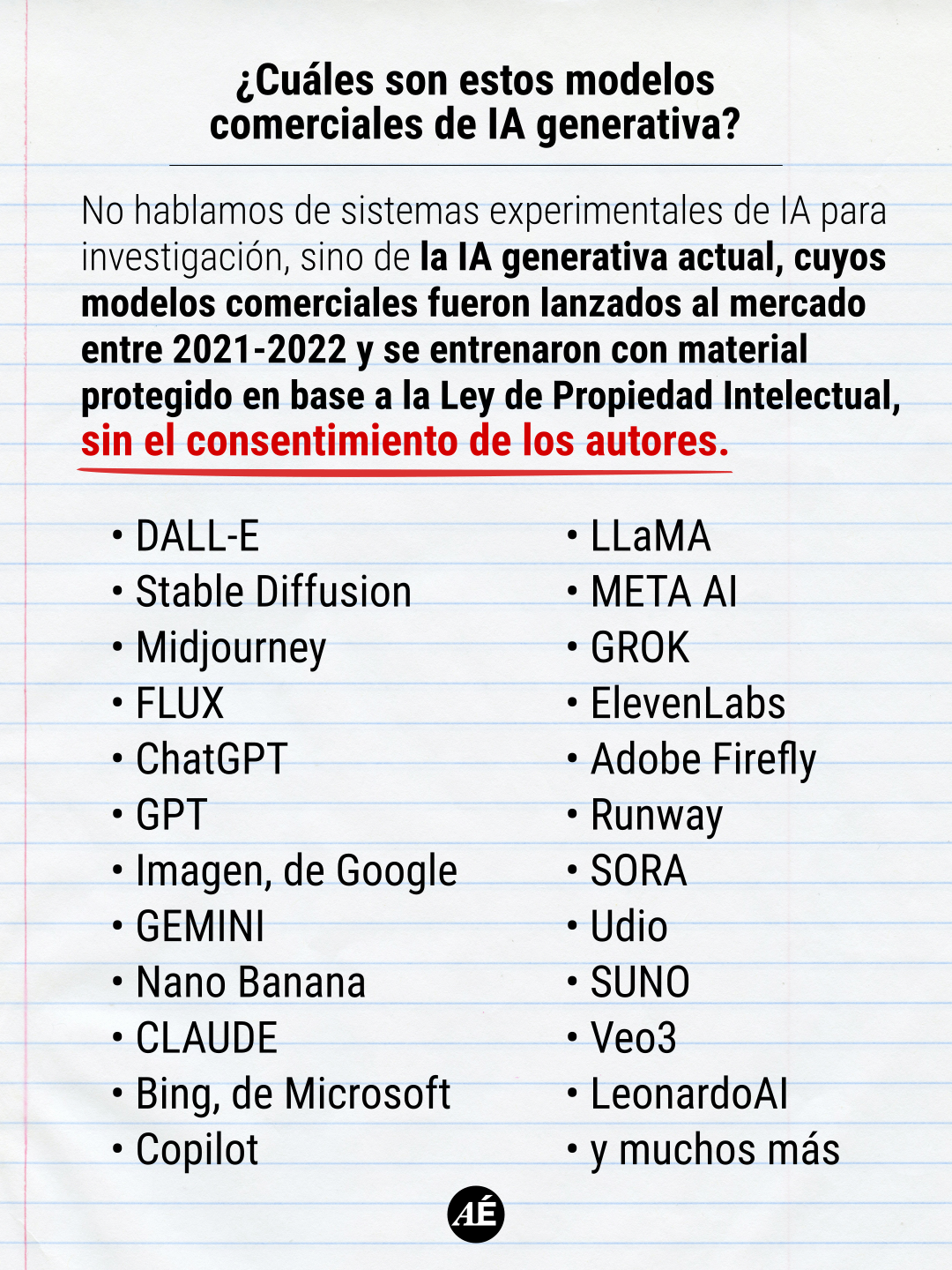

AI agents and APIs are significantly expanding the identity attack surface beyond what traditional, human-user-focused security programs were built to cover. Keeper Security CEO Darren Guccione says current identity security measures have not kept pace with these developments. The shift calls for re-evaluating security strategies to address machine-speed threats.

IMPACT Highlights the evolving security challenges posed by AI agents and APIs, which require updated strategies for identity protection.