Listen to Today's Qiita Trend Articles on a Podcast! (2026/05/09)

https://qiita.com/ennagara128/items/45b8df4dd526c2273053?utm_campaign=popular_items&utm_medium=feed&utm_source=popular_

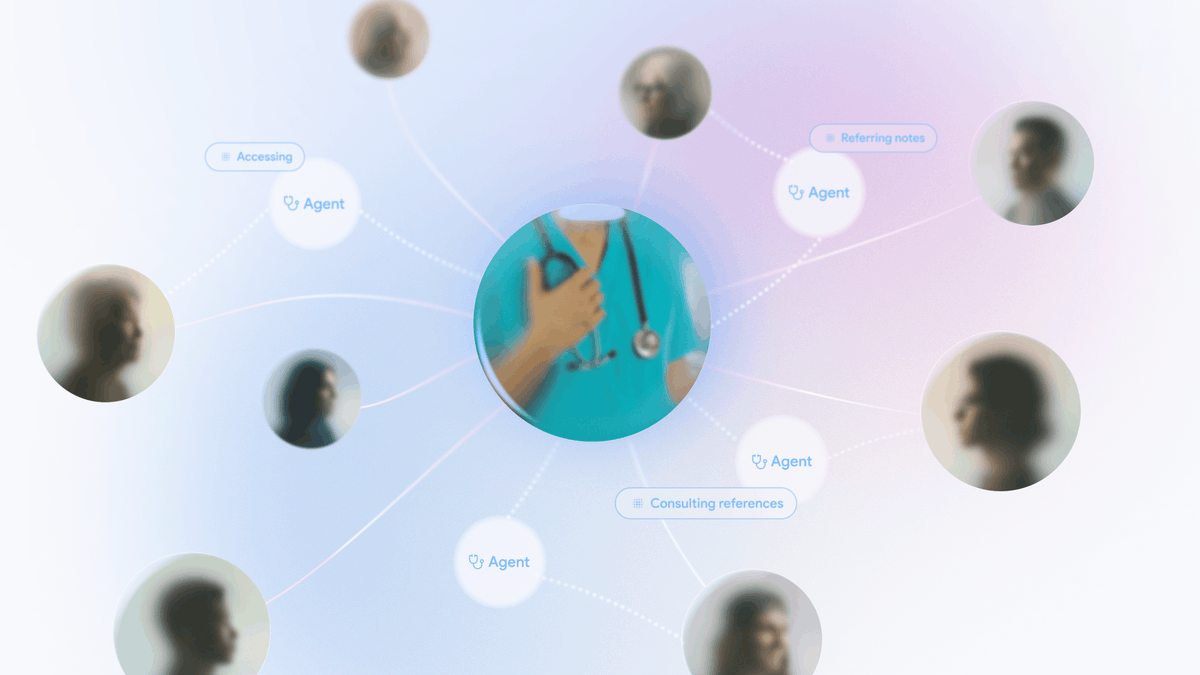

A new open-source multi-agent framework is designed to automatically elevate individuals' tacit knowledge into organizational knowledge. Released under the Apache 2.0 license, it aims to streamline how knowledge is shared and put to use within organizations. The project is written in Python and centers on generative AI capabilities.

IMPACT: Provides organizations with a new open-source tool for managing and leveraging internal knowledge through AI agents.