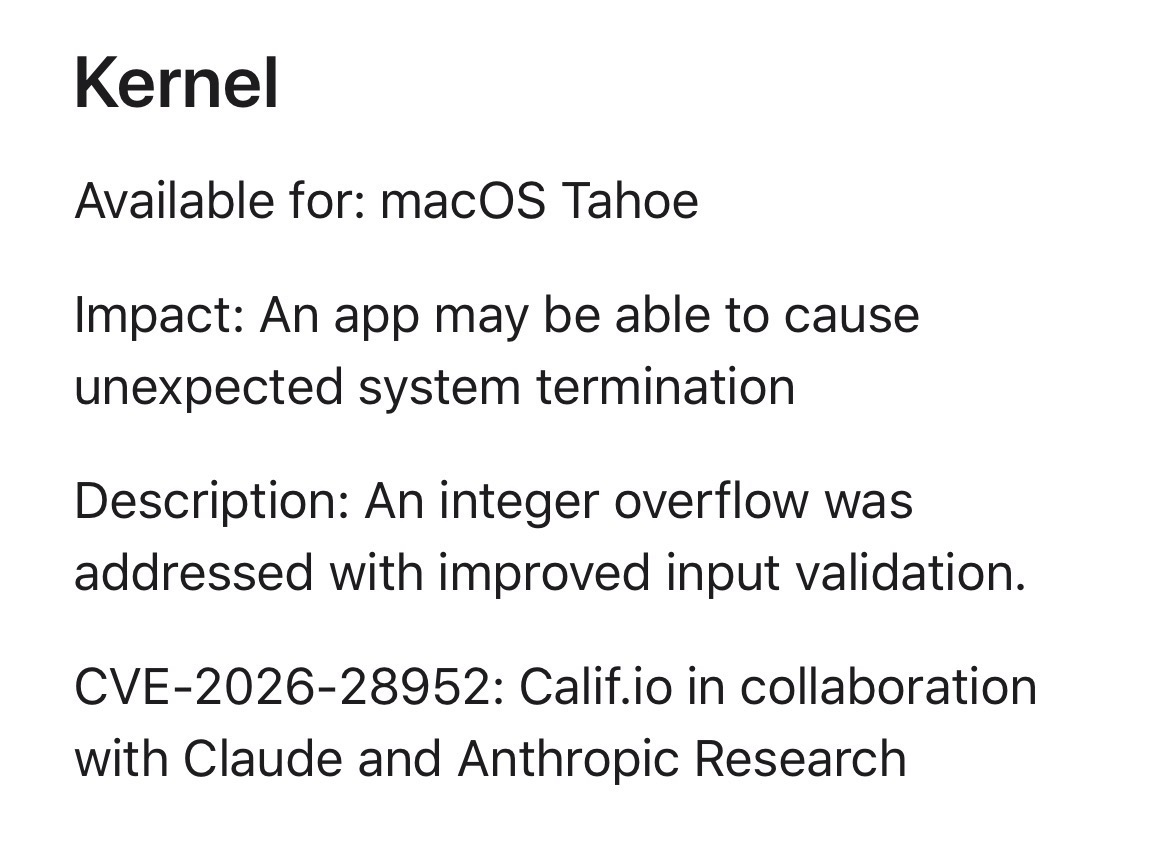

"the use of LLMs has become common in the literature review workflow, these tools do not replace the necessity for rigorous human oversight and authorial respon

Large language models (LLMs) are now widely used in conducting literature reviews. However, these tools cannot substitute for careful human supervision and authorial accountability. Fabricating citations, whether directly or through an automated system, is a serious ethical violation. AI

IMPACT Highlights the ongoing need for human judgment and ethical standards when integrating AI tools into academic workflows.