The Inference Shift

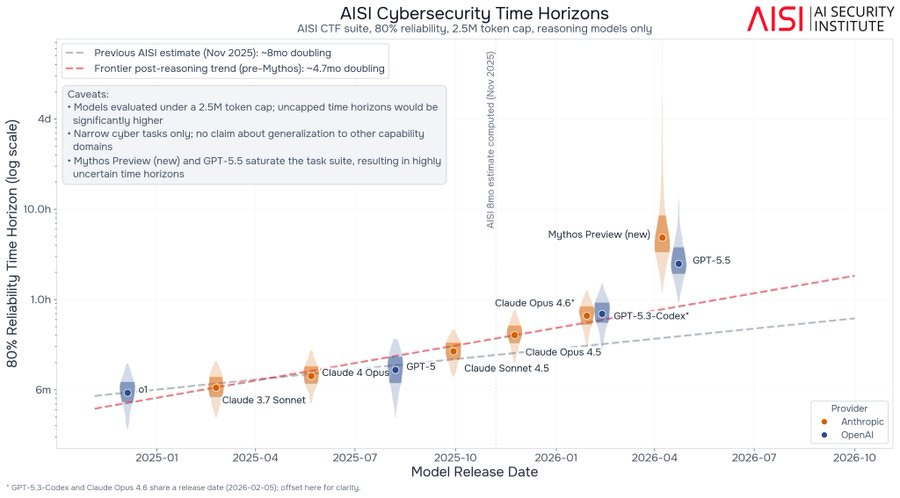

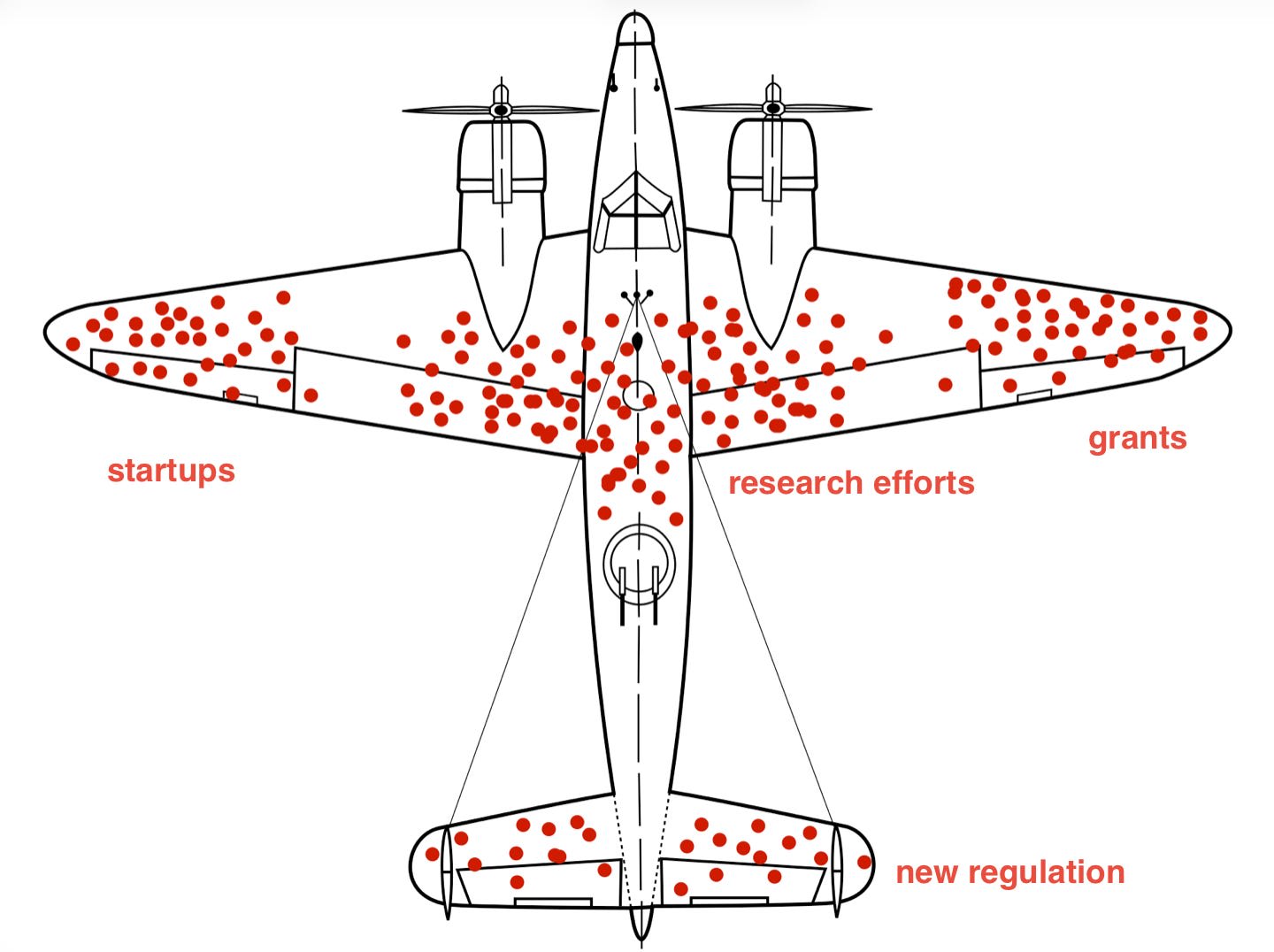

Cerebras Systems is significantly raising both the price and the share count of its IPO amid strong demand driven by the AI industry's need for compute. GPUs, particularly Nvidia's, have dominated AI workloads such as training, but AI compute is expected to become more heterogeneous. Specialized hardware beyond GPUs is likely to be crucial for both training and inference, especially as AI agents consume substantial computational resources.

AI IMPACT: Signals a shift toward heterogeneous AI compute architectures beyond GPUs, which matter for agent-based AI.