Did Cursor Secretly Remove My Rate Limit?

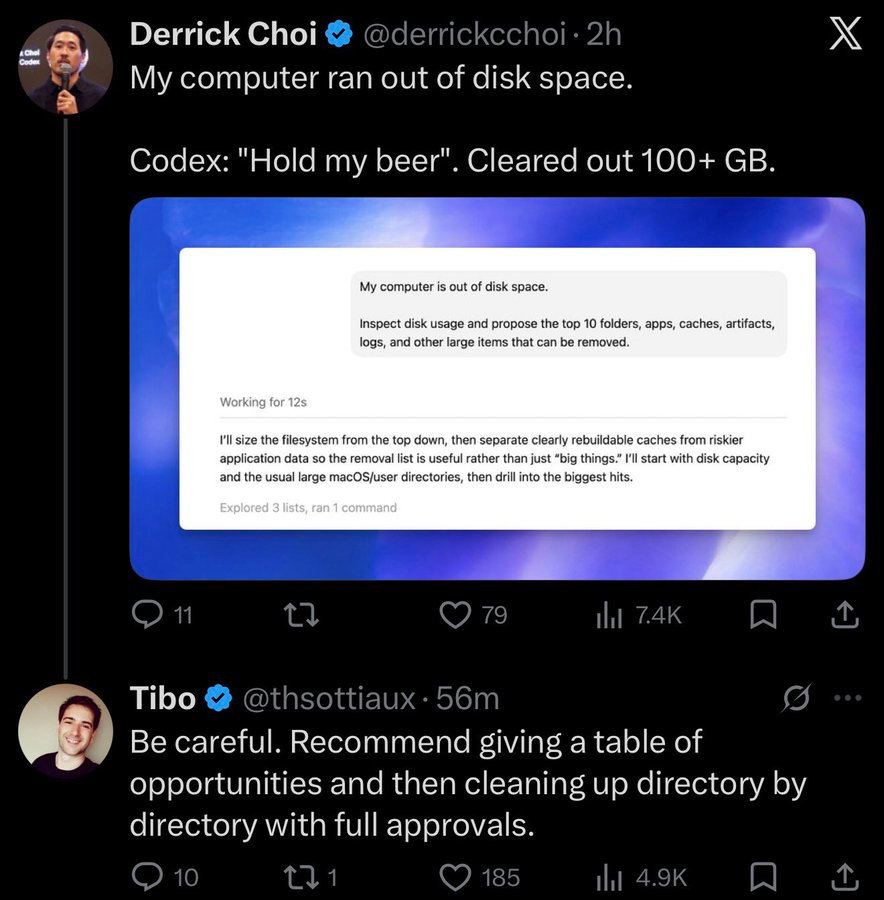

Users of the AI-powered code editor Cursor are reporting problems with their usage limits. Some say Cursor tells them they have hit their limit even though they still have significant usage remaining, while others are puzzled to find their limits apparently reset or refilled without explanation. These discrepancies have prompted speculation about the reliability and transparency of Cursor's rate-limiting system.

AI IMPACT: Unexpected usage-limit behavior in the AI-powered code editor Cursor is raising questions about the reliability of its rate-limiting system.