AI-Powered Security Breach

Discover how AI creates zero-day hacks and what it means for security

https://airanked.dev/posts/ai-powered-security-breach

#AI

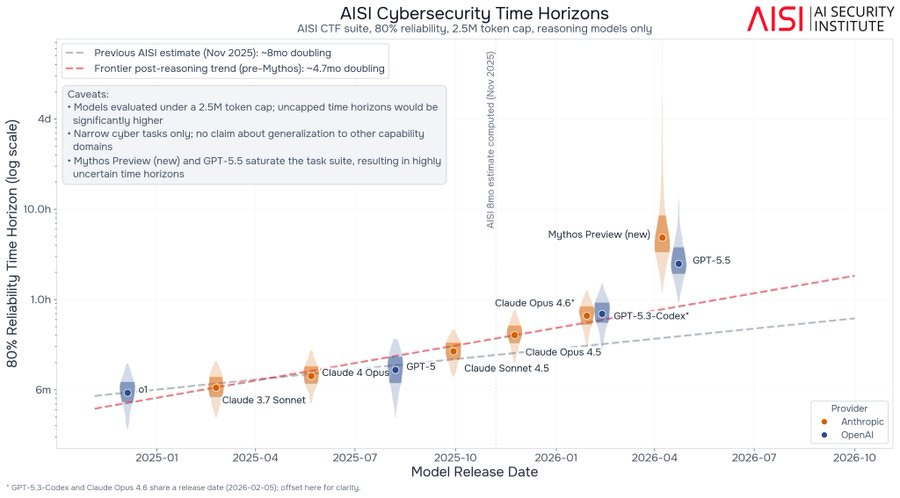

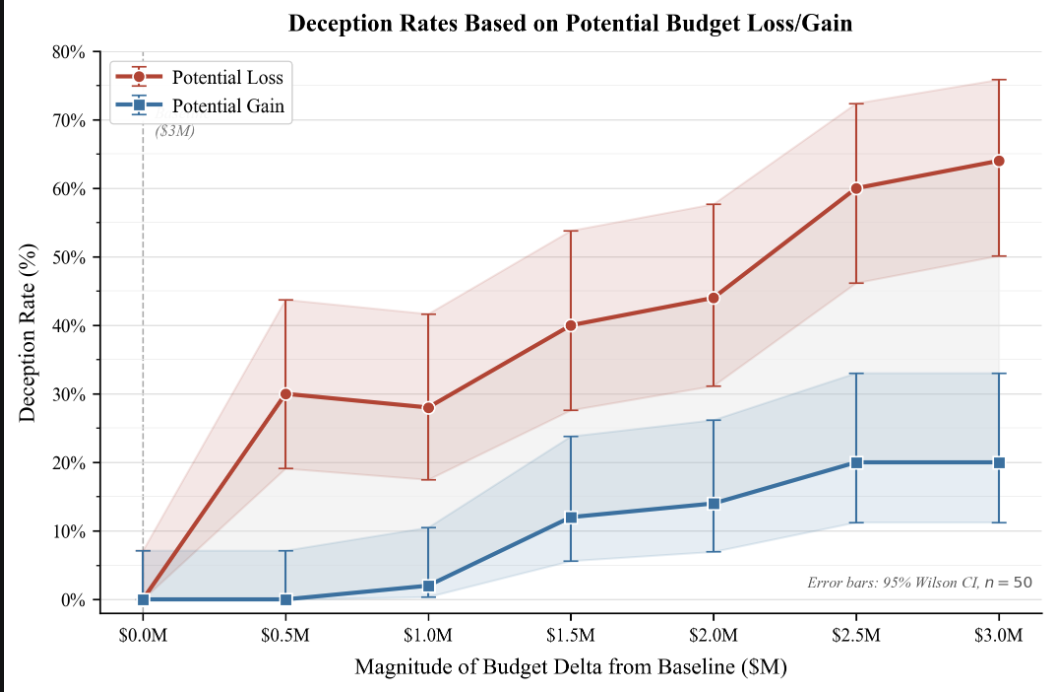

Artificial intelligence is increasingly being used to discover zero-day vulnerabilities, posing a significant threat to cybersecurity. These AI-driven methods can automate the process of finding previously unknown security flaws in software. The implications for security are profound, requiring a proactive approach to defend against these sophisticated attacks.

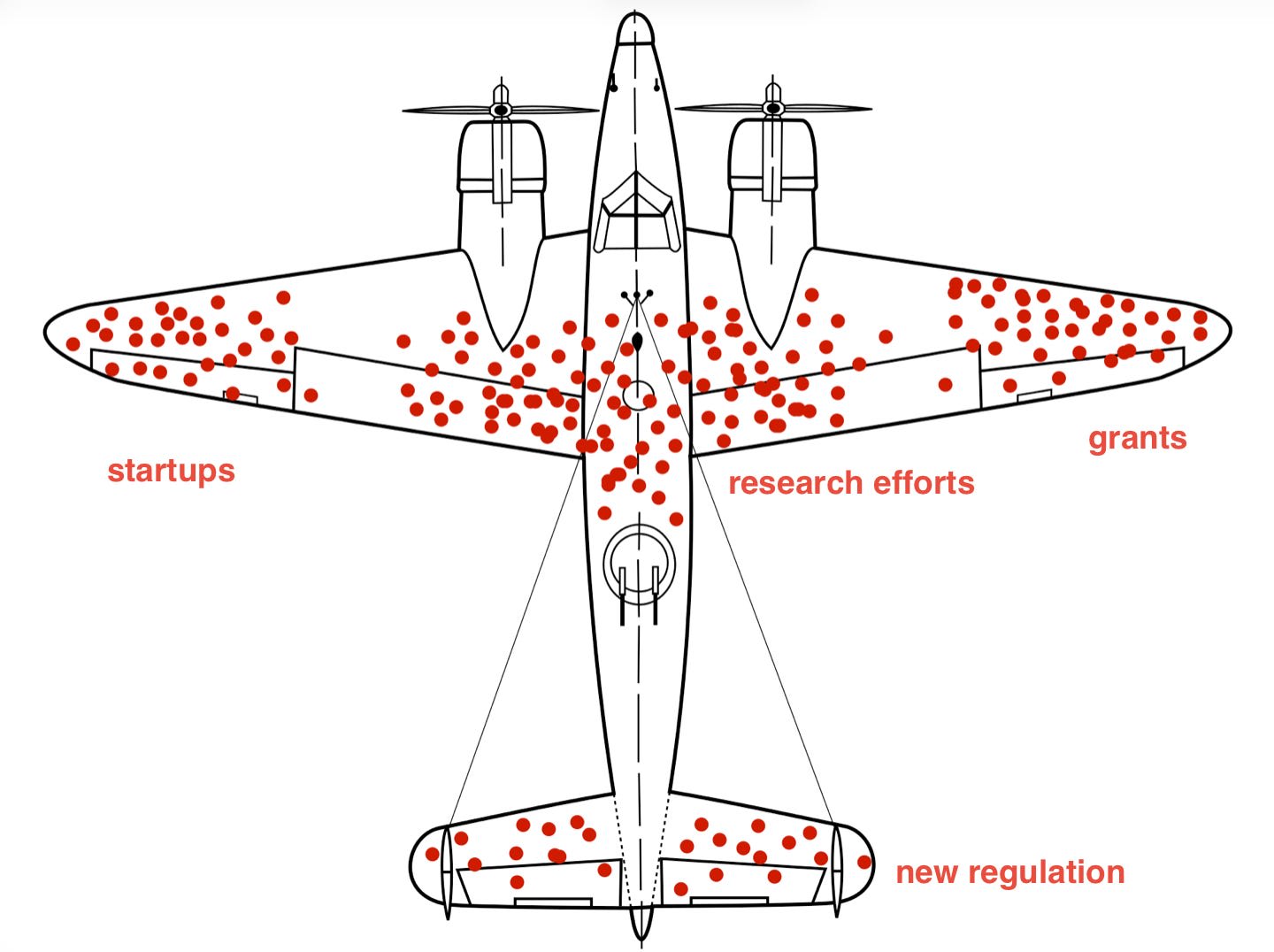

IMPACT: AI's capability to find zero-day exploits necessitates new defensive strategies in cybersecurity.