Auditing Agent Harness Safety

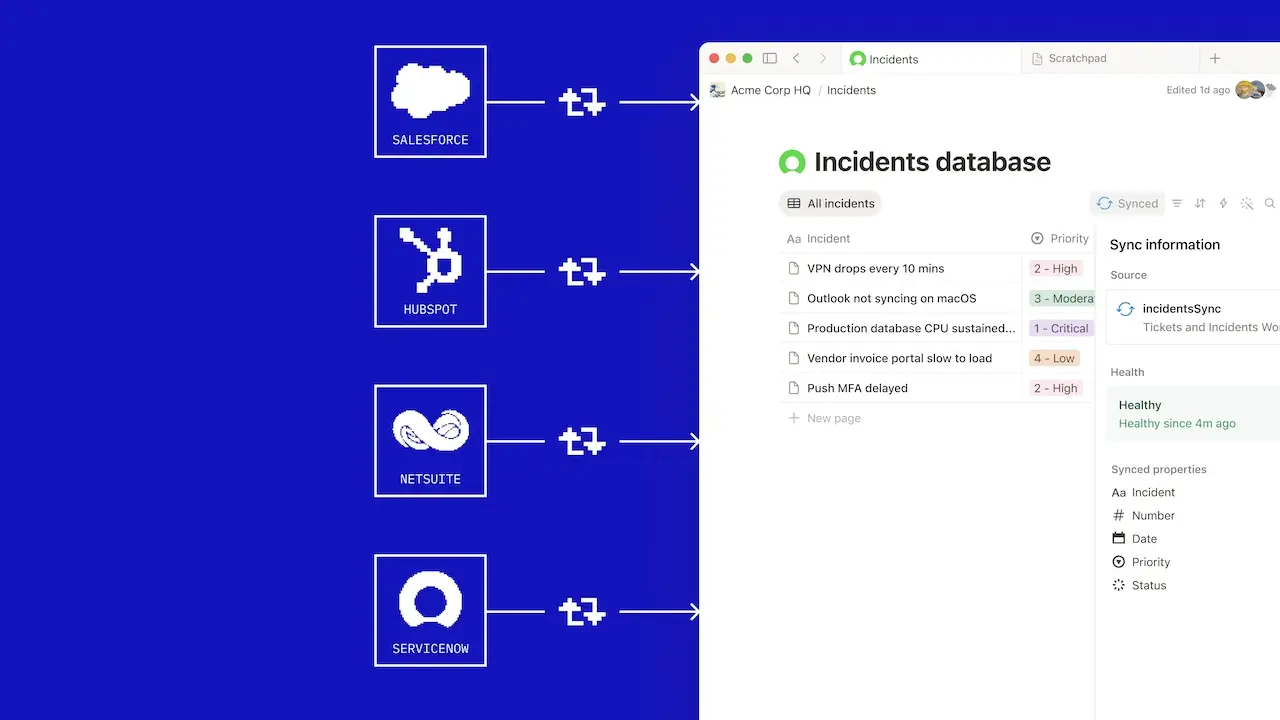

Researchers have introduced HarnessAudit, a new framework designed to evaluate the safety of execution harnesses used by large language model agents. These harnesses manage tool access, resource allocation, and inter-agent communication, but current safety benchmarks often overlook mid-trajectory violations. HarnessAudit focuses on auditing the entire execution path for compliance with user intent, permissions, and information flow, particularly in complex multi-agent systems. A new benchmark, HarnessAudit-Bench, comprising 210 tasks across eight domains, revealed that task completion does not correlate with safe execution, and safety risks escalate with trajectory length and inter-agent collaboration. AI

IMPACT Provides a method to ensure LLM agents adhere to safety constraints during execution, crucial for reliable deployment in complex applications.