Should you build or buy an MCP runtime for enterprise AI agents in 2026?

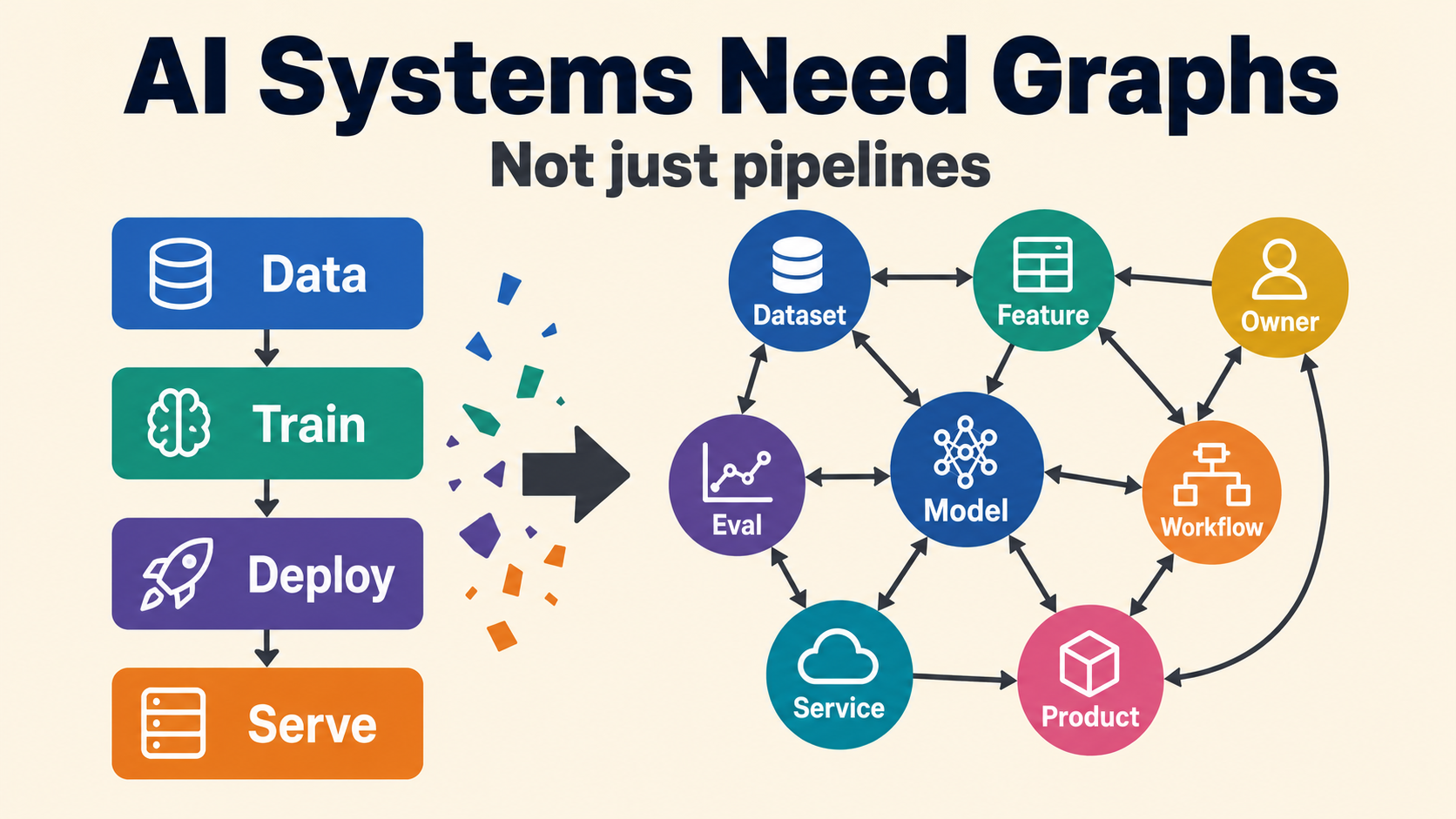

The article examines an architectural decision enterprises face in 2026: whether to build or buy the runtime infrastructure for their AI agents. It highlights that the engineering bottleneck has shifted from developing agents to integrating them securely into enterprise systems for widespread use. The choice is whether to develop a custom runtime layer that handles authorization, credential vaulting, and auditing, or to purchase an off-the-shelf solution.

IMPACT Guides enterprise AI strategy by outlining the build vs. buy trade-offs for agent runtime infrastructure, which affect deployment costs and security.